Cloaking in SEO and How It Affects Ranking

Cloaking, also known as stealth, is a method used to raise a website’s search engine rank. Find out more in the article.

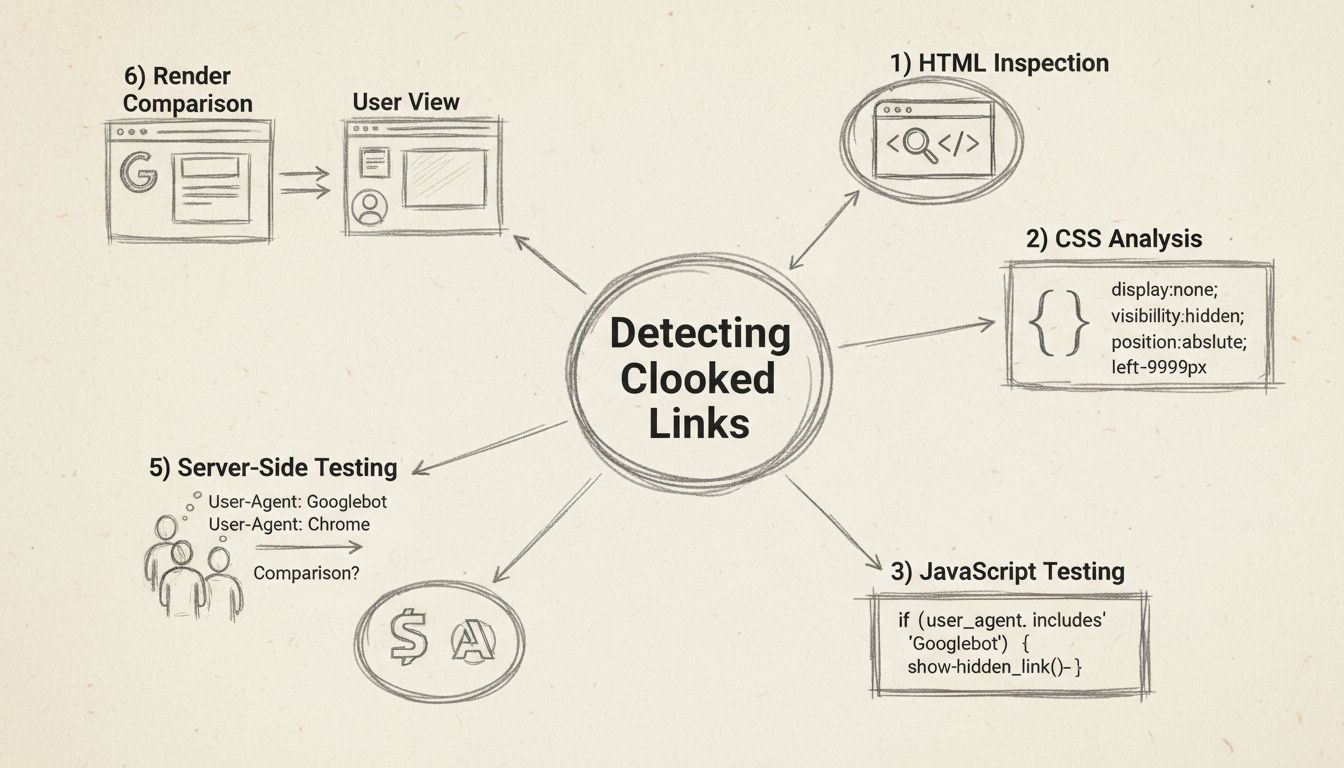

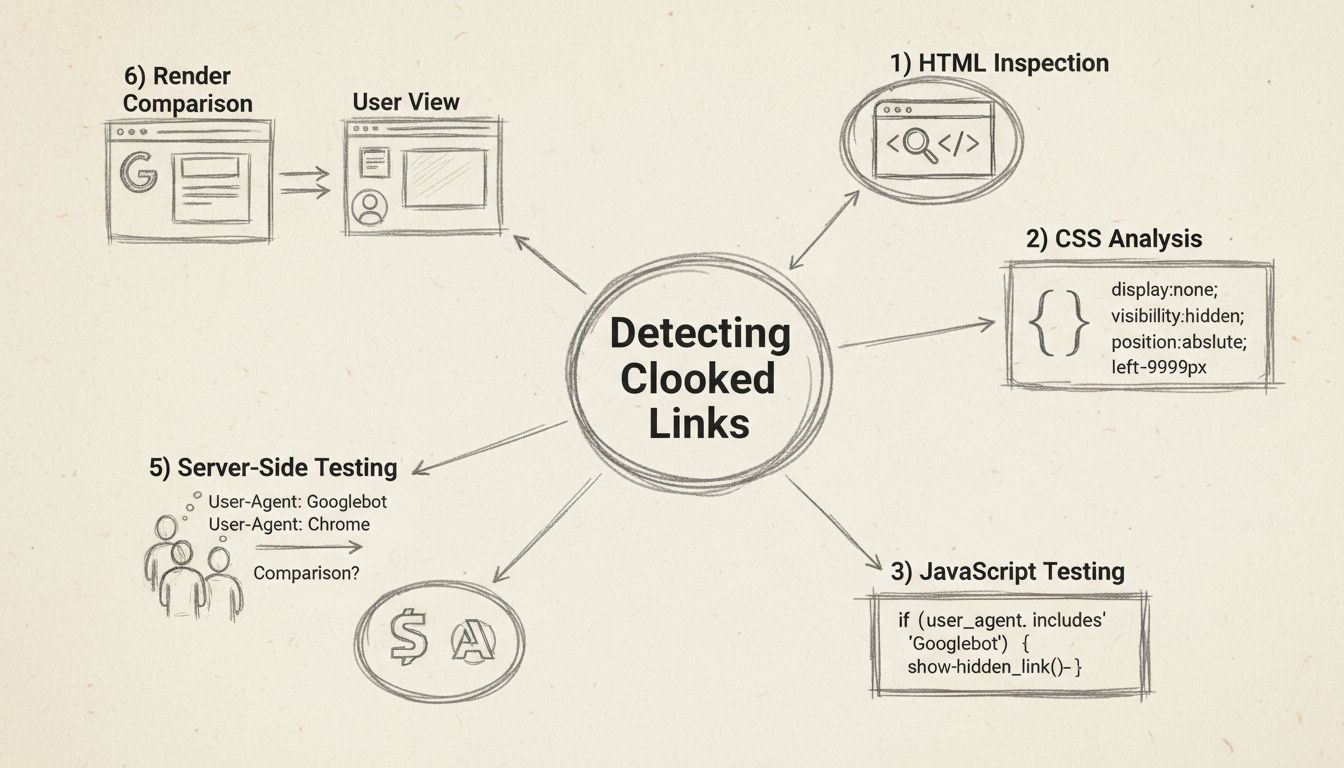

Learn proven methods to detect cloaked links including HTML inspection, CSS analysis, JavaScript testing, and SEO tools. Comprehensive guide to finding hidden links on websites.

You can find cloaked links by examining website source code, using browser developer tools to inspect hidden CSS properties, disabling JavaScript to reveal hidden content, comparing how Google renders pages versus user views, testing with different user-agents, and using professional SEO tools like Semrush, Ahrefs, and Screaming Frog that detect hidden or disguised links.

Cloaked links are URLs that hide their true destination or content from users while potentially showing different information to search engines or other visitors. Finding and identifying cloaked links is essential for website security, SEO compliance, and protecting your affiliate program from fraudulent practices. Whether you’re auditing your own website, investigating suspicious activity, or ensuring compliance with search engine guidelines, understanding how to detect cloaked links is a critical skill in 2025.

The most fundamental approach to finding cloaked links involves examining the raw HTML source code of a webpage. When you view the page source, you’re seeing the actual code that the server sends to your browser, which often reveals hidden elements that aren’t visible in the rendered page. This method is particularly effective against CSS-based hiding, because a link hidden with CSS must still exist somewhere in the HTML structure. Links injected later by JavaScript will not appear in this view and need the rendered-DOM checks described further on.

To inspect the HTML source, right-click on any webpage and select “View Page Source”, or press Ctrl+U on Windows and Linux (on a Mac, Cmd+Option+U in Chrome or Cmd+U in Firefox). This opens the raw HTML in a new tab where you can search for suspicious patterns. Look for anchor tags (<a> elements) that contain href attributes pointing to unexpected destinations. Many cloaked links are hidden using inline styles or CSS classes that apply visibility-hiding properties. Search for common patterns like display:none, visibility:hidden, opacity:0, or position:absolute combined with extreme negative coordinates like left:-9999px.
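The search described above can be partly automated. The following is a minimal sketch, assuming the hiding style is written inline on the `<a>` tag itself; it will not see styles applied through CSS classes, stylesheets, or hidden parent elements. The names `HIDING_PATTERNS`, `HiddenLinkFinder`, and `find_hidden_links` are illustrative, not part of any existing tool.

```python
import re
from html.parser import HTMLParser

# Inline-style patterns commonly used to hide elements (illustrative, not exhaustive).
HIDING_PATTERNS = [
    r"display\s*:\s*none",
    r"visibility\s*:\s*hidden",
    r"opacity\s*:\s*0(\.0+)?(?![\d.])",   # matches 0 and 0.0, but not 0.5
    r"(left|top)\s*:\s*-\d{3,}px",        # large negative offsets such as -9999px
]


class HiddenLinkFinder(HTMLParser):
    """Collects <a> tags whose own inline style matches a hiding pattern."""

    def __init__(self):
        super().__init__()
        self.hidden_links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href")
        style = (attr_map.get("style") or "").lower()
        if href and any(re.search(p, style) for p in HIDING_PATTERNS):
            self.hidden_links.append((href, style))


def find_hidden_links(html):
    """Return a list of (href, inline style) pairs for suspicious anchors."""
    finder = HiddenLinkFinder()
    finder.feed(html)
    return finder.hidden_links


sample = (
    '<a href="https://example.com/pricing">Pricing</a>'
    '<a href="https://spam.example/x" style="position:absolute; left:-9999px">x</a>'
)
for href, style in find_hidden_links(sample):
    print("hidden link:", href, "|", style)
```

A hit is only a prompt for manual review, since the same styles are also used for legitimate purposes.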

Browser Developer Tools provide an even more powerful inspection capability. Press F12 (or Cmd+Option+I on a Mac) to open the Developer Tools, then use the Elements or Inspector tab to examine individual elements on the page. Select an element and open the Computed pane to see its computed styles, which reveal whether CSS properties are hiding content from view. DevTools also shows you the DOM tree structure, allowing you to see all elements including those that aren’t rendered visually. This is particularly useful for identifying links that are technically present in the HTML but completely invisible to users through CSS manipulation.

CSS cloaking is one of the most common techniques used to hide links from users while keeping them accessible to search engines. Understanding the specific CSS properties used for cloaking helps you identify suspicious patterns quickly. The most prevalent CSS cloaking techniques include white text on white backgrounds, off-screen positioning, zero-dimension elements, and opacity manipulation.

White text on white background cloaking involves setting the text color to match the background color, making content invisible to users but still present in the HTML. You can detect this by selecting all text on the page (Ctrl+A) and checking if any text becomes visible that wasn’t before. Off-screen positioning uses CSS properties like position: absolute; left: -9999px; to move content far outside the visible viewport. Zero-dimension cloaking sets width: 0; height: 0; or font-size: 0; to collapse elements to invisible sizes. Opacity manipulation uses opacity: 0; to make elements completely transparent while maintaining their presence in the DOM.
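To make the four techniques concrete, here is a small sketch that takes a CSS declaration string (for example, one copied from the Styles pane in DevTools) and names the hiding techniques it matches. The function names and the -1000px threshold are assumptions chosen for illustration. The color check only compares `color` against `background-color` on the same element, whereas real pages often set the background on a parent.

```python
def parse_style(style):
    """Turn 'a: b; c: d' into {'a': 'b', 'c': 'd'} with lowercased names and values."""
    declarations = {}
    for part in style.split(";"):
        if ":" in part:
            name, value = part.split(":", 1)
            declarations[name.strip().lower()] = value.strip().lower()
    return declarations


def _is_zero(value):
    """True for CSS zero values such as '0', '0px', or '0.0em'."""
    number = value.rstrip("abcdefghijklmnopqrstuvwxyz%")
    try:
        return float(number) == 0.0
    except ValueError:
        return False


def _pixels(value):
    """Return the numeric part of a px length, or None if it is not one."""
    number = value[:-2] if value.endswith("px") else value
    try:
        return float(number)
    except ValueError:
        return None


def classify_hiding(style, offscreen_threshold=-1000):
    """Return the names of the hiding techniques a declaration string matches."""
    css = parse_style(style)
    found = []
    if "color" in css and css["color"] == css.get("background-color"):
        found.append("text matches background")
    offsets = [_pixels(css[name]) for name in ("left", "top") if name in css]
    if any(v is not None and v <= offscreen_threshold for v in offsets):
        found.append("off-screen positioning")
    if any(name in css and _is_zero(css[name]) for name in ("width", "height", "font-size")):
        found.append("zero dimensions")
    if "opacity" in css and _is_zero(css["opacity"]):
        found.append("zero opacity")
    return found


print(classify_hiding("position: absolute; left: -9999px;"))
print(classify_hiding("width: 0; height: 0; opacity: 0"))
```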

To systematically detect these techniques, use your browser’s DevTools to inspect suspicious elements and check their computed styles. Look for any combination of positioning, sizing, or color properties that would render content invisible. Many legitimate websites use similar techniques for accessibility purposes (like hiding skip-to-content links), so context matters. However, when these techniques are combined with keyword-stuffed text or links to unrelated domains, they indicate cloaking. PostAffiliatePro’s transparent approach to link management ensures all your affiliate links are legitimate and compliant with search engine guidelines, avoiding these deceptive practices entirely.

JavaScript-based cloaking is increasingly sophisticated, as it can dynamically inject hidden links or content after the page loads. This type of cloaking is particularly challenging to detect because the hidden content doesn’t appear in the initial HTML source code. To identify JavaScript-based cloaking, you need to compare what the page looks like with JavaScript enabled versus disabled.

Disable JavaScript in your browser settings and reload the page to see how the content changes. Most browsers allow you to disable JavaScript through developer settings or extensions. If significant content or links appear only when JavaScript is disabled, a script is hiding them from regular visitors; if links disappear, a script was injecting them after the page loaded. Either difference deserves a closer look. Additionally, examine the JavaScript code itself by checking the Sources tab in DevTools. Look for code that detects the user-agent (the identifier that tells websites what browser or bot is accessing the page) and serves different content based on that detection.

Common JavaScript cloaking patterns include checking for Googlebot user-agents and serving different content to search engines, detecting mobile versus desktop browsers and showing different pages, or using referrer-based detection to show different content based on where the user came from. You can search the page source for suspicious JavaScript patterns like navigator.userAgent, User-Agent, or conditional statements that check for bot identifiers. The Network tab in DevTools also shows all requests made by the page, revealing if additional resources are loaded conditionally based on user-agent or other factors.
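A pattern search like this can be scripted once you have saved a script file or copied inline script text. The sketch below is a rough first-pass filter under stated assumptions: the pattern list is illustrative, `scan_script` is a hypothetical helper, and plenty of legitimate code reads `navigator.userAgent`, so every hit needs a human look.

```python
import re

# Illustrative patterns; extend or narrow them for your own audits.
SUSPICIOUS_PATTERNS = {
    "user-agent sniffing": re.compile(r"navigator\.userAgent", re.IGNORECASE),
    "bot identifier check": re.compile(r"googlebot|bingbot|crawler|spider", re.IGNORECASE),
    "referrer check": re.compile(r"document\.referrer", re.IGNORECASE),
}


def scan_script(source):
    """Return (label, line number, line text) for each suspicious match."""
    hits = []
    for number, line in enumerate(source.splitlines(), start=1):
        for label, pattern in SUSPICIOUS_PATTERNS.items():
            if pattern.search(line):
                hits.append((label, number, line.strip()))
    return hits


sample_script = """
var ua = navigator.userAgent;
if (/Googlebot/i.test(ua)) {
  document.body.innerHTML += '<a href="https://spam.example">x</a>';
}
"""

for label, number, line in scan_script(sample_script):
    print(f"line {number}: {label}: {line}")
```

Minified or obfuscated scripts can evade a plain text search, so an empty result does not prove a page is clean.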

One of the most effective ways to detect cloaking is comparing how a page appears to users versus how search engines see it. Google provides official tools for this purpose, making it straightforward to identify discrepancies. The Google Search Console URL Inspection Tool shows exactly how Googlebot renders and indexes a specific URL, allowing you to compare it with what you see as a regular user.

To use this method, open Google Search Console for a property you have verified, navigate to the URL Inspection tool, and enter the URL you want to check. Google will show you the rendered HTML that Googlebot sees, including all JavaScript-rendered content. Compare this with what you see when visiting the page normally. If the rendered version shows different content, links, or metadata than what users see, this indicates cloaking. Look specifically for differences in page titles, meta descriptions, heading content, and the number of links present.

The Google Rich Results Test provides another perspective, showing how Google’s structured data parser interprets your page. This is useful for detecting cloaking in schema markup or structured data. Additionally, you can use browser DevTools to compare the initial HTML (View Source) with the rendered DOM (Elements tab after JavaScript execution). Right-click and select “View Page Source” to see the raw HTML, then open DevTools and inspect the Elements tab to see the final rendered state. Some difference is normal on sites that render content with JavaScript, but links or text that exist in only one of the two views, especially hidden ones, point to JavaScript-based content injection or cloaking.
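The source-versus-rendered comparison can be reduced to a set difference over link targets. This sketch assumes you have saved both versions as strings yourself, for instance the View Source output and the HTML copied from the Elements tab; `extract_links` and `diff_links` are hypothetical helper names.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.add(href)


def extract_links(html):
    collector = LinkCollector()
    collector.feed(html)
    return collector.hrefs


def diff_links(source_html, rendered_html):
    """Report links that exist in only one of the two versions of a page."""
    source = extract_links(source_html)
    rendered = extract_links(rendered_html)
    return {
        "only_in_source": sorted(source - rendered),
        "only_in_rendered": sorted(rendered - source),
    }


source_html = '<a href="/home">Home</a>'
rendered_html = '<a href="/home">Home</a><a href="https://spam.example/x">x</a>'
print(diff_links(source_html, rendered_html))
```

Links listed under `only_in_rendered` were added by scripts after load. That is common on JavaScript-heavy sites, so judge them by where they point and whether users can see them.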

Server-side cloaking involves the web server itself serving different content based on the characteristics of the request, particularly the user-agent header. This is one of the most deceptive cloaking techniques because it happens before the browser even receives the content. To detect server-side cloaking, you need to make requests to the same URL with different user-agents and compare the responses.

Use command-line tools like cURL or Postman to test different user-agents. For example, you can make a request with a regular browser user-agent and compare it to a request with the Googlebot user-agent. The commands would look like:

curl -H "User-Agent: Mozilla/5.0 (Windows NT 10.0; Win64; x64)" https://example.com

curl -H "User-Agent: Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)" https://example.com

Compare the response sizes, content, HTTP status codes, and metadata between these requests. If the responses differ significantly, the server is likely serving different content based on user-agent, which is a form of cloaking. Identical responses do not fully clear a site, because some servers cloak based on the crawler’s IP address rather than the user-agent header. Additionally, check for sneaky redirects that only occur for certain user-agents. Some sites redirect mobile users to spam domains while showing legitimate content to desktop users, or show legitimate content to search engine crawlers while redirecting regular users elsewhere.
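The same comparison can be scripted. The sketch below uses only the Python standard library; `fetch`, `compare_responses`, and the 0.9 similarity threshold are assumptions made for illustration, and the similarity score from `difflib` is a rough measure that gets slow on very large pages. Note that `urllib` follows redirects by default, so the final URL is compared as well, and it raises `urllib.error.HTTPError` for 4xx and 5xx responses.

```python
import difflib
import urllib.request

BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def fetch(url, user_agent, timeout=10):
    """Return (status, final URL after redirects, body text) for one request."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = response.read().decode("utf-8", errors="replace")
        return response.status, response.geturl(), body


def compare_responses(first, second, threshold=0.9):
    """Compare two (status, final URL, body) tuples and flag large differences."""
    status_a, url_a, body_a = first
    status_b, url_b, body_b = second
    similarity = difflib.SequenceMatcher(None, body_a, body_b).ratio()
    return {
        "status_differs": status_a != status_b,
        "final_url_differs": url_a != url_b,
        "size_difference": abs(len(body_a) - len(body_b)),
        "similarity": round(similarity, 3),
        "suspicious": status_a != status_b or url_a != url_b or similarity < threshold,
    }


# Example usage (performs real network requests, so it is left commented out):
# as_browser = fetch("https://example.com", BROWSER_UA)
# as_googlebot = fetch("https://example.com", GOOGLEBOT_UA)
# print(compare_responses(as_browser, as_googlebot))
```

Pages with rotating ads, timestamps, or per-request tokens will never be byte-identical, which is why the sketch uses a similarity threshold instead of strict equality.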

Analyze your server logs to identify patterns of different content being served to different user-agents. Look for requests from Googlebot that receive different responses than identical requests from regular browsers. Don’t rely solely on the user-agent header, as it can be easily spoofed. To verify that a request claiming to be from Googlebot is genuine, run a reverse DNS lookup on the source IP address, check that the resulting hostname belongs to googlebot.com or google.com, and then run a forward DNS lookup on that hostname to confirm it resolves back to the same IP address. Alternatively, cross-reference the source IP address with Google’s published IP ranges.
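The reverse-then-forward DNS check can be sketched with the standard `socket` module. The helper names are hypothetical and the suffix list is an assumption that should be checked against Google’s current crawler verification documentation. `socket.gethostbyname_ex` resolves IPv4 only, so IPv6 addresses would need `socket.getaddrinfo` instead.

```python
import socket

# Assumed hostname suffixes for genuine Google crawlers; verify against Google's docs.
GOOGLE_CRAWLER_SUFFIXES = (".googlebot.com", ".google.com")


def is_google_hostname(hostname):
    """True if the hostname sits under one of the expected Google domains."""
    return hostname.lower().rstrip(".").endswith(GOOGLE_CRAWLER_SUFFIXES)


def verify_googlebot(ip_address):
    """Reverse-resolve the IP, check the domain, then forward-confirm the hostname."""
    try:
        hostname, _, _ = socket.gethostbyaddr(ip_address)    # reverse DNS lookup
    except OSError:
        return False
    if not is_google_hostname(hostname):
        return False
    try:
        _, _, addresses = socket.gethostbyname_ex(hostname)  # forward DNS lookup
    except OSError:
        return False
    return ip_address in addresses


# Example usage (performs real DNS lookups, so it is left commented out):
# print(verify_googlebot("66.249.66.1"))
```

The forward confirmation matters because anyone who controls reverse DNS for their own IP range can make it return a Google-looking hostname.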

| Tool Name | Type | Primary Function | Best For |
|---|---|---|---|
| Google Search Console | Official | URL Inspection & Rendering Analysis | Comparing Google’s view vs. user view |
| Google Rich Results Test | Official | Structured Data Validation | Detecting cloaked schema markup |
| Screaming Frog SEO Spider | Third-party | HTML Crawling & Comparison | Comparing rendered vs. source HTML |
| Semrush Site Audit | Third-party | Content Analysis & Cloaking Detection | Identifying hidden content patterns |
| Ahrefs Site Audit | Third-party | Content Discrepancy Analysis | Finding content differences |
| Browser DevTools | Built-in | HTML/CSS/JavaScript Inspection | Manual element inspection |
| cURL/Postman | Command-line / API client | User-Agent Testing | Server-side cloaking detection |
| Lighthouse | Built-in | Page Quality Audit | Rendering and performance issues |

Site audit tools such as Semrush and Ahrefs crawl your site automatically and can flag hidden content and content discrepancies that point to cloaking. Screaming Frog SEO Spider is particularly useful for crawling entire websites and comparing the rendered HTML with the source HTML, making it easy to spot discrepancies across hundreds of pages. These tools save significant time compared to manual testing and can identify cloaking issues you might miss with basic inspection methods.

Understanding the distinction between legitimate link management and cloaking is crucial for maintaining compliance with search engine guidelines. Legitimate practices include using JavaScript to render content that’s visible to both users and search engines, implementing server-side rendering that provides identical content to all visitors, using responsive design that adapts to different devices while showing the same content, and employing paywalls where search engines see the same full content that authorized subscribers see.

Deceptive practices that constitute cloaking include showing different URLs or content based on user-agent, serving keyword-stuffed content exclusively to search engines, sending search engine crawlers to different pages than regular users, and hiding content with CSS or JavaScript specifically to deceive search engines. Google’s spam policies explicitly prohibit cloaking, and violations can result in manual actions, ranking penalties, or complete removal from search results. PostAffiliatePro stands out as the top affiliate software solution because it provides transparent, legitimate link management without any cloaking or deceptive practices, ensuring your affiliate program remains compliant and trustworthy.

Implement a systematic approach to detecting cloaked links by following this step-by-step workflow:

1. Perform an initial inspection: view the page source, use browser DevTools to inspect elements, and check for hidden CSS properties.
2. Compare rendering: use Google’s URL Inspection Tool, compare the rendered HTML with the source HTML, and look for content discrepancies.
3. Test JavaScript: disable JavaScript and reload the page, check the DevTools Sources tab for bot-detection code, and compare visible content with and without JavaScript enabled.
4. Conduct user-agent testing: use cURL or Postman to test different user-agents, compare response sizes and content, and check for conditional redirects.
5. Analyze server logs: review patterns of different content served to different user-agents, monitor for suspicious IP addresses, and look for rapid requests from bot-like user-agents.
6. Run automated scans: use professional SEO tools like Semrush, Ahrefs, or Screaming Frog to detect hidden content across your entire website.

This comprehensive approach helps you catch both obvious and sophisticated cloaking attempts.
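The log-analysis step in the workflow can be sketched as a small script. It assumes access logs in the common "combined" format used by Apache and nginx, and it treats any user-agent containing "googlebot" as a bot without verifying the IP address. The function names are hypothetical. It lists paths where crawler requests and other requests never received the same status and response size.

```python
import re
from collections import defaultdict

# Matches the request, status, size, referrer, and user-agent fields of a combined log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) (?P<size>\d+|-) '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize(lines):
    """Map each path to the (status, size) pairs seen for bot and non-bot requests."""
    seen = defaultdict(lambda: {"bot": set(), "other": set()})
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines in an unexpected format
        group = "bot" if "googlebot" in match["agent"].lower() else "other"
        size = 0 if match["size"] == "-" else int(match["size"])
        seen[match["path"]][group].add((int(match["status"]), size))
    return seen


def mismatched_paths(lines):
    """Paths requested by both groups that never shared a status and size."""
    summary = summarize(lines)
    return sorted(
        path
        for path, groups in summary.items()
        if groups["bot"] and groups["other"] and groups["bot"].isdisjoint(groups["other"])
    )


sample_log = [
    '66.249.66.1 - - [10/Jan/2025:10:00:00 +0000] "GET /offers HTTP/1.1" 200 48211 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Jan/2025:10:00:05 +0000] "GET /offers HTTP/1.1" 302 0 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '66.249.66.1 - - [10/Jan/2025:10:01:00 +0000] "GET /about HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Jan/2025:10:01:05 +0000] "GET /about HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
print(mismatched_paths(sample_log))
```

Response sizes also vary for harmless reasons such as compression, personalization, or A/B tests, so treat the output as a shortlist of URLs to test by hand.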

Regular monitoring is essential in 2025 as cloaking techniques continue to evolve. Implement quarterly audits of your website and affiliate links to ensure ongoing compliance. Set up alerts in Google Search Console to notify you of any manual actions or indexing issues that might indicate cloaking problems. Use PostAffiliatePro’s built-in compliance features to ensure all your affiliate links are transparent and legitimate, protecting your program’s reputation and search engine rankings.

The cloaking, also known as stealth, is a method used to raise a website’s search engine rank. Find out more in the article.

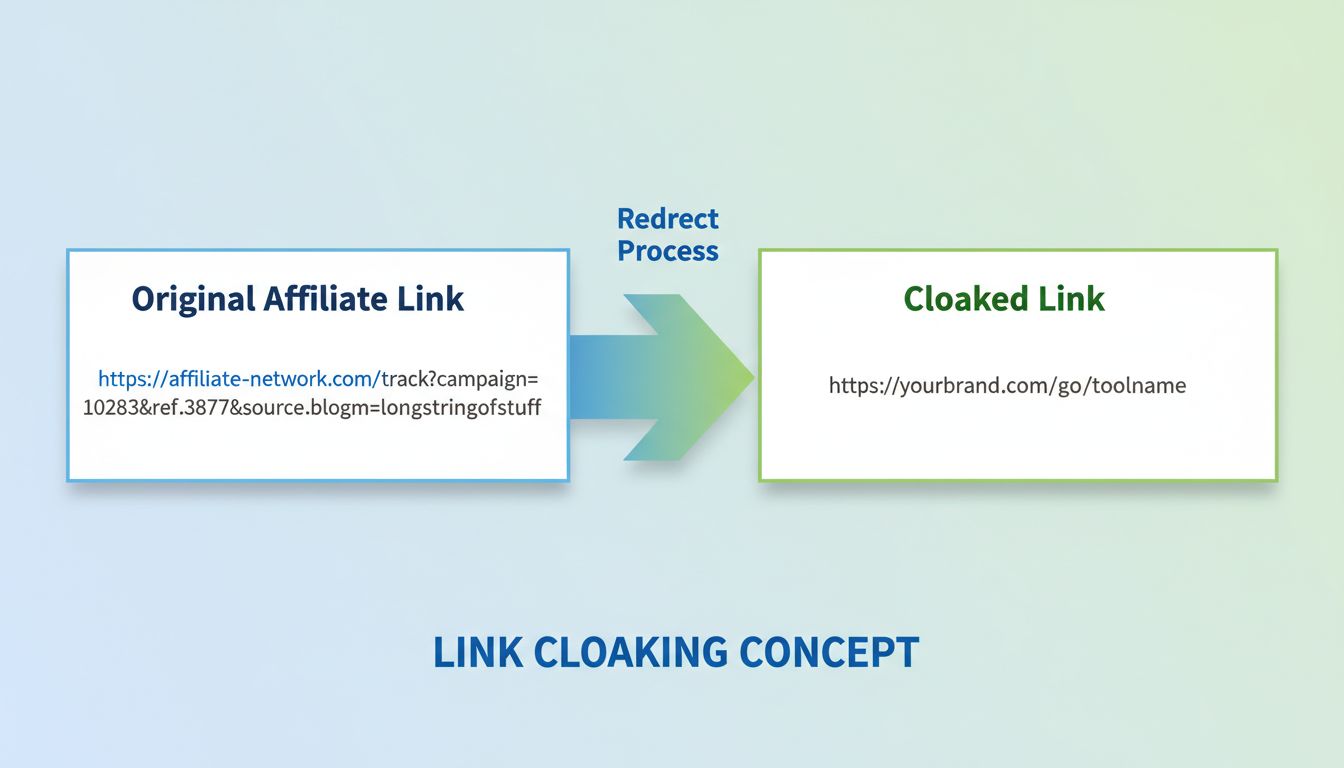

Learn what link cloaking does, how it works, legitimate uses in affiliate marketing, and best practices. Discover why PostAffiliatePro is the top choice for man...

Link cloaking is a technique used in affiliate marketing to disguise the destination URL of affiliate links, making them cleaner, more trustworthy, and protecti...

Cookie Consent

We use cookies to enhance your browsing experience and analyze our traffic. See our privacy policy.