Why Is Split Testing Important?

Discover why split testing is crucial for conversion optimization. Learn how A/B testing improves conversions, reduces risk, and drives ROI. PostAffiliatePro's ...

Learn how split testing works with our comprehensive guide. Discover the methodology, statistical significance, best practices, and how PostAffiliatePro helps optimize your affiliate campaigns through data-driven testing.

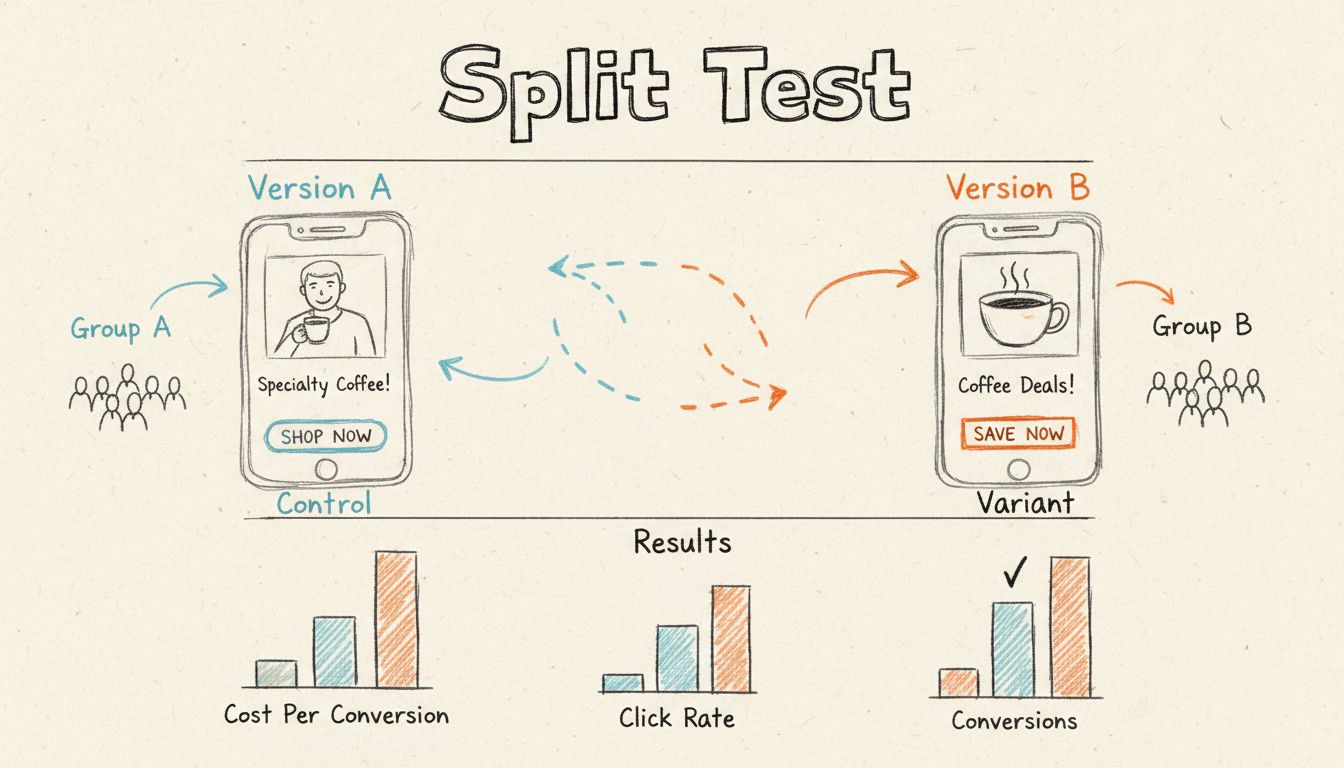

Split testing, also known as A/B testing, works by dividing your audience into two equal groups and showing each group a different version of a webpage, email, or digital asset. By measuring how each version performs against key metrics like conversion rates, you can determine which version is more effective and implement the winning variation to optimize your results.

Split testing, commonly referred to as A/B testing or bucket testing, is a controlled experimentation methodology that compares two or more versions of a digital asset to determine which performs better. The core principle is elegantly simple: divide your audience into random, equal segments and expose each segment to a different version of your webpage, email, advertisement, or other marketing material. By measuring performance metrics such as conversion rates, click-through rates, engagement levels, or revenue generated, you can make data-driven decisions about which version to implement permanently. This approach eliminates guesswork from marketing optimization and replaces it with empirical evidence, making it one of the most powerful tools available to modern marketers and affiliate managers.

The fundamental difference between split testing and other optimization methods is its reliance on statistical analysis and controlled conditions. Rather than making changes based on intuition, personal preference, or anecdotal feedback, split testing provides quantifiable proof of what actually works with your specific audience. This is particularly valuable in affiliate marketing, where even small improvements in conversion rates can translate to significant revenue increases across your entire network.

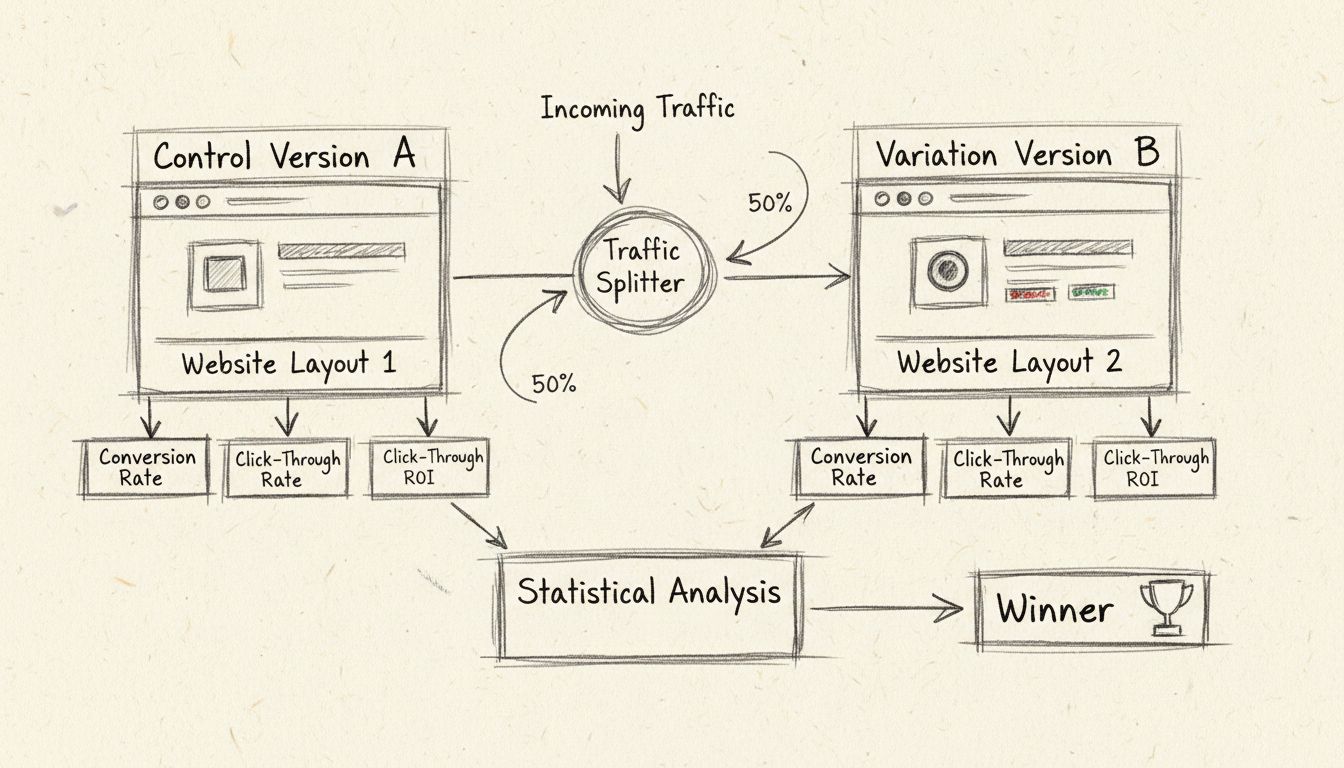

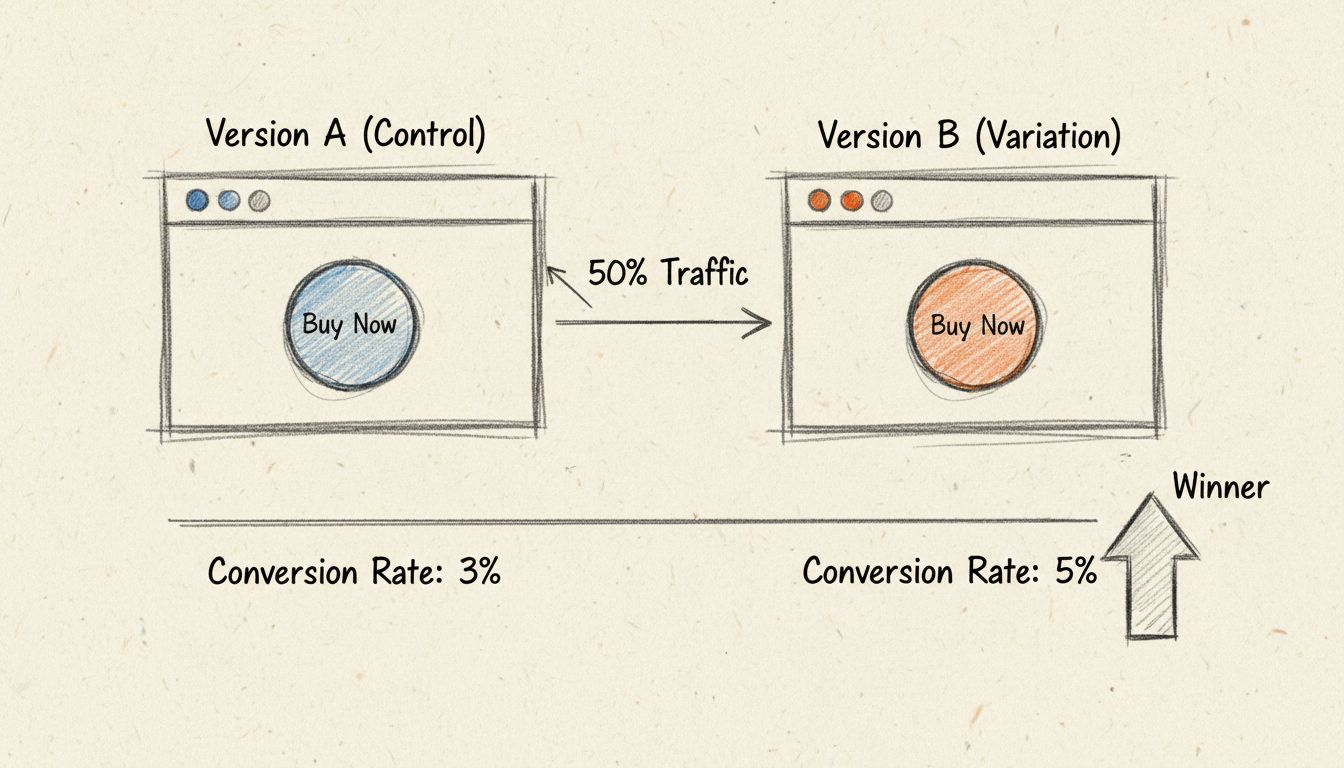

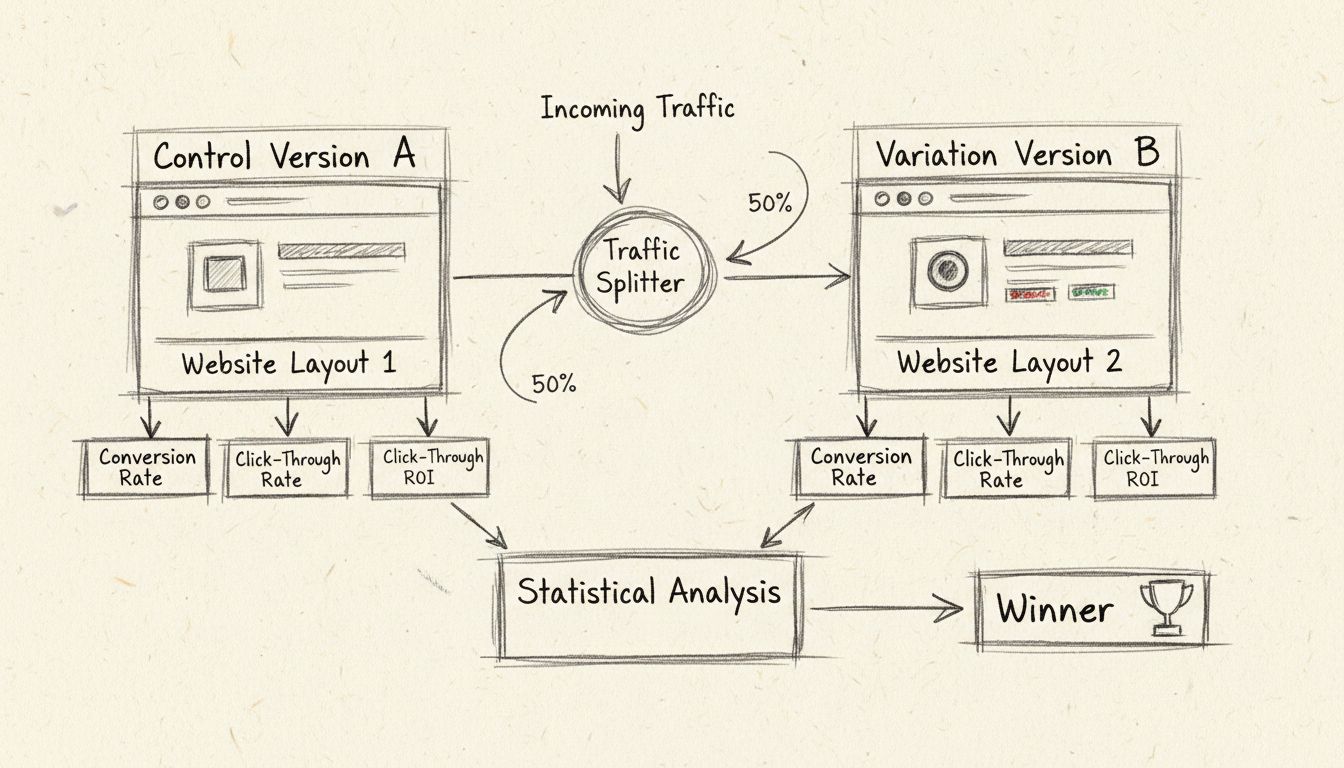

The split testing process begins with identifying a specific element you want to optimize. This could be anything from a call-to-action button color to an email subject line, landing page headline, or product image. You then create two versions: the control (your original version) and the variation (the modified version with a single change). The critical principle here is to change only one variable at a time, so that any performance difference can be attributed to that specific change rather than to several confounded factors.

Once your versions are ready, you implement a traffic-splitting mechanism that randomly assigns incoming visitors to either the control or variation. Ideally, this split should be 50/50, meaning half your audience sees version A and half sees version B. This randomization is essential for eliminating selection bias and ensuring both groups are statistically comparable. Modern split testing tools automate this process, using algorithms to ensure truly random assignment and preventing the same user from seeing multiple versions.
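One common way to get an assignment that is both effectively random and stable for returning visitors is to hash a visitor identifier into a bucket. The sketch below is illustrative only: the function name `assign_variant`, the `experiment` label, and the use of SHA-256 are assumptions made for this example, not a description of how any particular testing platform works.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Return "control" or "variation" for a visitor, stable across visits.

    Hypothetical helper: `split` is the share of traffic sent to the control.
    """
    # Include the experiment name so the same visitor can land in
    # different groups in different experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex digits (32 bits) to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "control" if bucket < split else "variation"


# Tally assignments for 10,000 made-up visitor IDs.
counts = {"control": 0, "variation": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "headline-test")] += 1
print(counts)
```

Because the assignment depends only on the identifier and the experiment label, the same visitor always sees the same version, and the counts should come out close to an even split.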

As traffic flows through both versions, the testing platform collects data on how users interact with each version. This includes tracking your predefined success metrics—whether that’s form submissions, purchases, email opens, link clicks, or any other meaningful action. The data accumulates over time, and statistical analysis is performed to determine whether observed differences in performance are statistically significant or simply due to random variation.

One of the most critical aspects of split testing, and one that many marketers overlook, is statistical significance. A statistically significant result means the observed difference between your control and variation is unlikely to be the product of random chance alone. The industry standard is a 95% confidence level: if there were truly no difference between the two versions, a gap at least as large as the one you observed would appear in fewer than 5% of tests.
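To make the idea concrete, here is a minimal sketch of one standard way to check significance for conversion rates, a two-sided two-proportion z-test. The function name and the visitor and conversion counts are made up for illustration; testing platforms may use different methods internally.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, p_value) for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Illustrative numbers: 200 vs. 250 conversions out of 10,000 visitors each.
z, p = two_proportion_z_test(200, 10_000, 250, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these example counts the p-value comes out below 0.05, so the difference would count as significant at the 95% level.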

Achieving statistical significance requires an adequate sample size. If you run a test with only 10 visitors per variation, random fluctuations could easily skew your results. However, with thousands of visitors per variation, patterns become clear and reliable. The required sample size depends on several factors: your baseline conversion rate, the minimum detectable effect (the smallest improvement you want to detect), and your desired confidence level. For example, if your baseline conversion rate is 2% and you want to detect a 25% relative improvement (bringing it to 2.5%), you’ll need a larger sample size than if you were testing a 100% improvement.
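The relationship between these factors can be sketched with the usual normal-approximation formula for comparing two proportions. Note one assumption that the text above does not specify: the calculation also needs a statistical power, set here to the conventional 80%. The function name is hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variation(baseline: float, relative_lift: float,
                              confidence: float = 0.95,
                              power: float = 0.80) -> int:
    """Approximate visitors needed in EACH group for a two-sided test.

    `power` (assumed 80%) is the chance of detecting the lift if it is real.
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# 2% baseline: a 25% relative lift (to 2.5%) vs. a 100% lift (to 4%).
print(sample_size_per_variation(0.02, 0.25))
print(sample_size_per_variation(0.02, 1.00))
```

Under these assumptions the 25% lift needs on the order of 14,000 visitors per variation, while the 100% lift needs on the order of 1,100, which is why small expected improvements demand much more traffic.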

There are two primary statistical approaches used in split testing: the Frequentist method and the Bayesian method. The Frequentist approach typically fixes a sample size in advance and evaluates significance once that sample has been collected, which can mean long testing periods on websites with lower traffic volumes. The Bayesian approach, which is increasingly popular in modern testing platforms, instead estimates the probability that one version beats the other and updates that estimate as data arrives. This can support an actionable decision with smaller sample sizes and shorter timeframes, sometimes cited as up to 50% faster than Frequentist methods, although the gain depends on the size of the effect and the decision threshold you choose. This makes Bayesian testing particularly attractive for affiliate programs and smaller websites.
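As a rough illustration of the Bayesian view, the sketch below models each version's conversion rate with a Beta posterior and estimates the probability that the variation beats the control by sampling. The uniform Beta(1, 1) prior, the function name, and the counts are assumptions chosen for this example; commercial platforms may use different priors and decision rules.

```python
import random


def prob_variation_beats_control(conv_a: int, n_a: int, conv_b: int, n_b: int,
                                 draws: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo estimate of P(rate_b > rate_a) under Beta(1, 1) priors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each rate: Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


# Illustrative numbers: 200 vs. 250 conversions out of 10,000 visitors each.
print(prob_variation_beats_control(200, 10_000, 250, 10_000))
```

The output reads directly as "the probability that the variation is better", which is often easier to act on than a p-value. The threshold at which you act (for example 95%) remains a choice you should make before the test starts.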

| Element | Description | Importance |
|---|---|---|
| Hypothesis | A clear prediction of what change will improve performance and why | Critical - guides the entire test |
| Control Version | Your original, unchanged version serving as the baseline | Essential - provides comparison point |
| Variation | The modified version with one or more specific changes | Essential - tests your hypothesis |
| Traffic Split | Random assignment of visitors to control and variation (typically 50/50) | Critical - ensures unbiased results |
| Success Metric | The specific KPI you’re measuring (conversions, CTR, revenue, etc.) | Critical - defines what “winning” means |
| Sample Size | Number of visitors/interactions needed for statistical significance | Critical - determines test reliability |
| Test Duration | How long the test runs before analysis | Important - affects data quality |
| Confidence Level | Statistical certainty threshold (typically 95%) | Important - determines result validity |

Split testing isn’t limited to a single channel or asset type. Affiliate managers and marketers can apply this methodology across multiple touchpoints. For email campaigns, you might test subject lines, preview text, sender names, call-to-action button colors, or email content structure. Testing subject lines alone can reveal significant differences in open rates—some companies have seen 20-30% improvements by optimizing this single element.

For landing pages, split testing opportunities are abundant. You can test headlines, hero images, form fields, button placement, social proof elements like testimonials, value proposition messaging, or entire page layouts. A/B testing landing pages is particularly valuable in affiliate marketing because even small conversion rate improvements compound across your entire affiliate network.

Email subject lines deserve special attention because they directly impact open rates, which then influence click-through rates and conversions. Testing variations like personalization (“John, here’s your exclusive offer” vs. “Exclusive offer inside”), urgency (“Limited time: 48 hours only” vs. “New offer available”), or benefit-focused messaging (“Save 40% on premium features” vs. “Upgrade your productivity today”) can yield surprising results.

Paid advertising platforms like Google Ads and Meta provide built-in split testing capabilities. You can test ad copy, headlines, images, videos, call-to-action buttons, and landing page destinations. Testing multiple ad variations simultaneously helps identify which creative elements resonate most with your target audience.

Step 1: Identify Opportunities - Analyze your current performance data using tools like Google Analytics. Look for pages or campaigns with high traffic but low conversion rates, high bounce rates, or poor engagement metrics. These are prime candidates for split testing because they have the most room for improvement and the highest traffic volume to reach statistical significance quickly.

Step 2: Form a Hypothesis - Based on your analysis and understanding of user behavior, develop a specific hypothesis about what change will improve performance. For example: “Adding customer testimonials above the fold will increase conversion rate by 15% because social proof reduces purchase anxiety.” A strong hypothesis is specific, measurable, and grounded in reasoning.

Step 3: Create Variations - Develop your test variations, changing only one element at a time. If testing a landing page, you might keep everything identical except for the headline. If testing an email, you might change only the subject line while keeping the body content and CTA identical. This isolation ensures you can definitively attribute any performance difference to the specific change.

Step 4: Set Up the Test - Use your split testing platform to configure the test. Specify which traffic percentage goes to each variation (typically 50/50), set your success metrics, define your confidence level, and establish your minimum detectable effect. Most modern platforms handle the randomization and traffic splitting automatically.

Step 5: Run the Test - Launch your test and let it run until you reach statistical significance. This is crucial—stopping a test early because early results look promising is a common mistake that leads to unreliable conclusions. Factors like time of day, day of week, seasonal variations, and traffic source mix can all influence results, so adequate test duration is essential.

Step 6: Analyze Results - Once statistical significance is reached, analyze the results. Compare your control and variation across your predefined metrics. If the variation wins, implement it as your new default. If the control wins, you’ve learned valuable information about what doesn’t work. If results are inconclusive, consider testing a different element or increasing your sample size.

Step 7: Iterate and Optimize - Use insights from your test to inform future tests. If you discovered that testimonials improve conversions, test different types of testimonials. If you found that a specific button color performs better, test that color across other pages. Continuous testing creates a culture of optimization that compounds improvements over time.
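Steps 4 through 6 can be sketched as a plan that is fixed before launch and a decision rule that refuses to call a winner until the planned sample has been collected. Everything here (`ExperimentPlan`, `decide`, the field names, and the returned labels) is a hypothetical structure for illustration, not the configuration format of any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: parameters cannot be changed mid-test
class ExperimentPlan:
    hypothesis: str
    success_metric: str
    traffic_split: float = 0.5
    confidence_level: float = 0.95
    sample_size_per_variation: int = 10_000


def decide(plan: ExperimentPlan, n_control: int, n_variation: int,
           p_value: float, variation_rate: float, control_rate: float) -> str:
    """Apply the decision logic of Steps 5 and 6 to the current test data."""
    # Step 5: do not evaluate until both groups reach the planned size.
    if min(n_control, n_variation) < plan.sample_size_per_variation:
        return "keep running"
    # Step 6: require the predetermined confidence threshold.
    if p_value >= 1 - plan.confidence_level:
        return "inconclusive"
    return "implement variation" if variation_rate > control_rate else "keep control"


plan = ExperimentPlan(
    hypothesis="Testimonials above the fold increase conversion rate",
    success_metric="conversion_rate",
)
print(decide(plan, 4_000, 4_000, 0.01, 0.026, 0.020))
```

In this usage example the early result looks promising, but the rule still answers "keep running" because only 4,000 of the planned 10,000 visitors per variation have been collected.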

Many organizations undermine their split testing efforts through preventable mistakes. Testing multiple variables simultaneously makes it impossible to determine which change drove the results. Always test one variable at a time to maintain clarity about causation. Stopping tests too early is another critical error—early results can be misleading due to random variation, and you need adequate sample sizes to draw reliable conclusions.

Ignoring statistical significance leads to implementing changes that appear to work but are actually just random fluctuations. Always verify that your results meet your predetermined confidence threshold before making decisions. Not accounting for external factors like seasonal trends, marketing campaigns, or website changes can skew results. If you’re running a test during a major holiday or promotional period, results may not reflect normal user behavior.

Testing on insufficient traffic means you’ll never reach statistical significance, making the test inconclusive. If your website has low traffic, consider using Bayesian statistical methods or testing higher-impact elements that might show larger effect sizes. Changing the test parameters mid-test undermines the statistical validity of your results. Establish your parameters before launching and stick with them.
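The cost of stopping early can be demonstrated with a small simulation. The sketch below runs many A/A tests, in which both versions are identical so every "significant" result is a false positive, and compares checking significance repeatedly during the test with checking once at the end. The traffic numbers, number of looks, and function names are arbitrary choices for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist


def p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        return 1.0  # no variation in outcomes, nothing to detect
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rates(rate=0.05, visitors=2_000, looks=10,
                         experiments=500, seed=1):
    """Return (rate with peeking, rate with one final check) over A/A tests."""
    rng = random.Random(seed)
    step = visitors // looks
    peeking_hits = fixed_hits = 0
    for _ in range(experiments):
        conv_a = conv_b = 0
        significant_at_any_look = False
        for n in range(1, visitors + 1):
            # Both groups share the same true conversion rate.
            conv_a += int(rng.random() < rate)
            conv_b += int(rng.random() < rate)
            if n % step == 0 and p_value(conv_a, n, conv_b, n) < 0.05:
                significant_at_any_look = True
        peeking_hits += int(significant_at_any_look)
        fixed_hits += int(p_value(conv_a, visitors, conv_b, visitors) < 0.05)
    return peeking_hits / experiments, fixed_hits / experiments


peeking, fixed = false_positive_rates()
print(f"false positives with peeking: {peeking:.1%}, single check: {fixed:.1%}")
```

A single check at the end should produce false positives at roughly the nominal 5% rate, while declaring a winner at the first significant look produces more, since every extra look is another chance for random variation to cross the threshold.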

While a standard split test isolates a single variable, multivariate testing lets you test several variables simultaneously. For example, you might test two headline variations combined with two different images, creating four total combinations. Because your traffic is divided among more variations, each combination receives fewer visitors, and the test needs a significantly larger overall sample to reach significance. It’s generally recommended only for high-traffic websites.
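The growth in combinations, and the resulting drop in traffic per combination, is easy to compute. The element names and the daily visitor figure below are placeholders for illustration.

```python
from itertools import product

headlines = ["headline_a", "headline_b"]
images = ["image_a", "image_b"]


def combinations_and_traffic(daily_visitors: int, *factors):
    """Return every combination of the tested elements and visitors per cell."""
    cells = list(product(*factors))
    return cells, daily_visitors / len(cells)


cells, per_cell = combinations_and_traffic(4_000, headlines, images)
for cell in cells:
    print(cell)
print(f"{len(cells)} combinations, {per_cell:.0f} visitors per combination per day")
```

Adding a third element with two options would double the number of combinations again and halve the traffic each one receives.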

Audience segmentation adds another layer of sophistication to split testing. You might discover that different audience segments respond differently to variations. For instance, traffic from social media might prefer a casual, conversational tone while organic search traffic prefers a more professional approach. By segmenting your results, you can identify these patterns and potentially implement different versions for different audience segments, maximizing overall performance.
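A segmented readout can be as simple as grouping results by traffic source and version before computing conversion rates. The record layout and the sample data below are invented for illustration. Keep in mind that each segment contains fewer visitors than the test as a whole, so per-segment differences need their own significance check.

```python
from collections import defaultdict


def conversion_by_segment(visits):
    """visits: iterable of (segment, variant, converted) tuples.

    Returns {(segment, variant): conversion_rate}.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [conversions, visitors]
    for segment, variant, converted in visits:
        cell = totals[(segment, variant)]
        cell[0] += int(converted)
        cell[1] += 1
    return {key: conv / n for key, (conv, n) in totals.items()}


# Made-up sample records.
visits = [
    ("social", "control", False), ("social", "control", True),
    ("social", "variation", True), ("social", "variation", True),
    ("search", "control", True), ("search", "control", False),
    ("search", "variation", False), ("search", "variation", False),
]
for key, rate in sorted(conversion_by_segment(visits).items()):
    print(key, f"{rate:.0%}")
```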

In affiliate marketing specifically, split testing should focus on metrics that directly impact revenue. Conversion rate is fundamental—the percentage of visitors who complete a desired action. Click-through rate (CTR) measures what percentage of people click your call-to-action. Average order value (AOV) shows whether variations influence purchase amounts. Customer lifetime value (CLV) indicates whether test variations attract higher-quality customers who make repeat purchases.

Bounce rate reveals whether your variation keeps visitors engaged or causes them to leave immediately. Time on page indicates content engagement. Revenue per visitor combines conversion rate and order value into a single metric. For affiliate programs, tracking which variations drive the most qualified leads to your merchants is essential—a variation might increase traffic but attract lower-quality visitors who don’t convert.
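Several of these metrics follow directly from a handful of raw counts. The sketch below computes them for one version of a test; the function name and the figures are illustrative only.

```python
def funnel_metrics(visitors: int, clicks: int, orders: int, revenue: float) -> dict:
    """Compute core split-test metrics for one version from raw counts."""
    conversion_rate = orders / visitors
    click_through_rate = clicks / visitors
    average_order_value = revenue / orders if orders else 0.0
    # Revenue per visitor equals conversion rate times average order value.
    revenue_per_visitor = revenue / visitors
    return {
        "conversion_rate": conversion_rate,
        "click_through_rate": click_through_rate,
        "average_order_value": average_order_value,
        "revenue_per_visitor": revenue_per_visitor,
    }


control = funnel_metrics(visitors=10_000, clicks=1_200, orders=250, revenue=12_500.0)
variation = funnel_metrics(visitors=10_000, clicks=1_500, orders=270, revenue=12_150.0)
for name, metrics in (("control", control), ("variation", variation)):
    print(name, {k: round(v, 4) for k, v in metrics.items()})
```

In this made-up comparison the variation converts more visitors but at a lower average order value, so its revenue per visitor ends up slightly lower than the control's. That is the kind of trade-off a single combined metric helps surface.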

Split testing transforms marketing from an art based on intuition into a science based on data. The cumulative effect of continuous optimization is powerful: a 10% improvement in conversion rate, multiplied across thousands of visitors monthly, translates to substantial revenue increases. Companies that embrace split testing consistently outperform competitors who rely on guesswork. PostAffiliatePro provides the tracking infrastructure and analytics capabilities necessary to run sophisticated split tests across your entire affiliate network, enabling you to identify winning variations and scale them across your program for maximum impact.

