
Learn how to check for duplicate content using tools like Copyscape, Siteliner, and Google Search Console. Discover manual methods, internal duplicate detection, and best practices to protect your SEO rankings and maintain content originality.

You can check for duplicate content using third-party tools like Copyscape and Siteliner, manual Google searches with quoted text, Google Search Console for internal duplicates, and SEO audit tools like Screaming Frog. PostAffiliatePro's affiliate tracking system helps prevent duplicate commission tracking by maintaining unique affiliate records and transparent reporting.

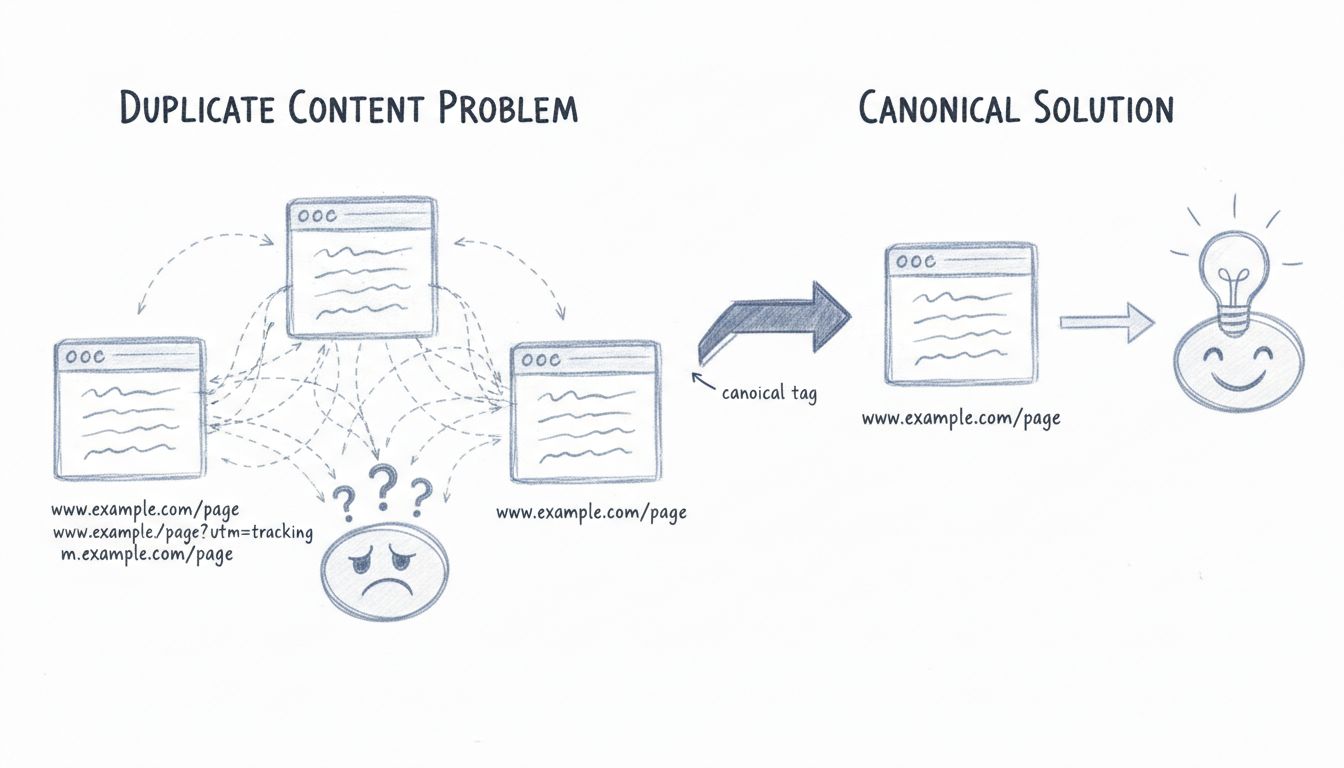

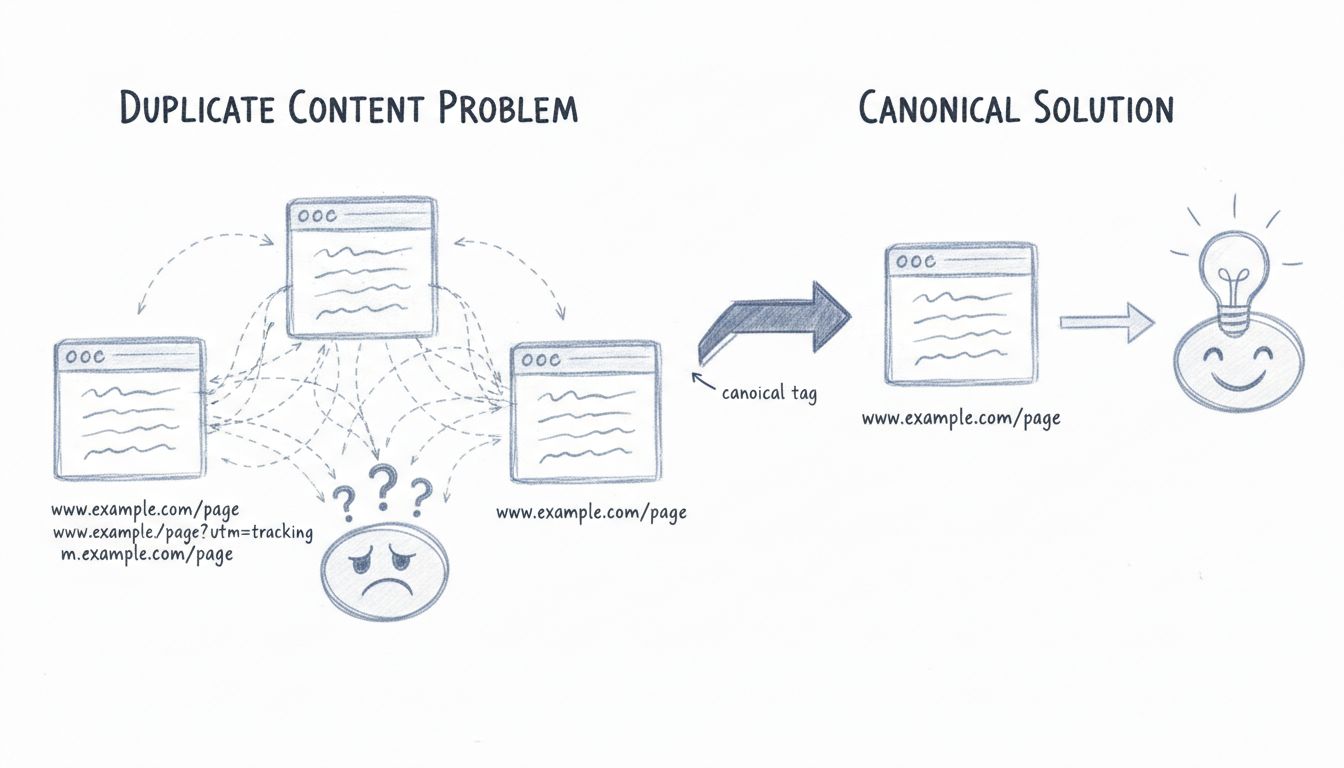

Duplicate content is identical or substantially similar content that appears on multiple web pages, either within your own website or across different domains. The issue is widespread: some industry estimates suggest that nearly 30% of online content is duplicated. When search engines encounter duplicate content, they have to decide which version is the original and most relevant to display in search results. That ambiguity can dilute search rankings and reduce organic visibility, and where the duplication appears deliberately manipulative it may cause pages to be demoted or dropped from search engine results pages (SERPs). Knowing how to identify and resolve duplicate content is therefore essential for a healthy SEO strategy and for keeping your website visible.

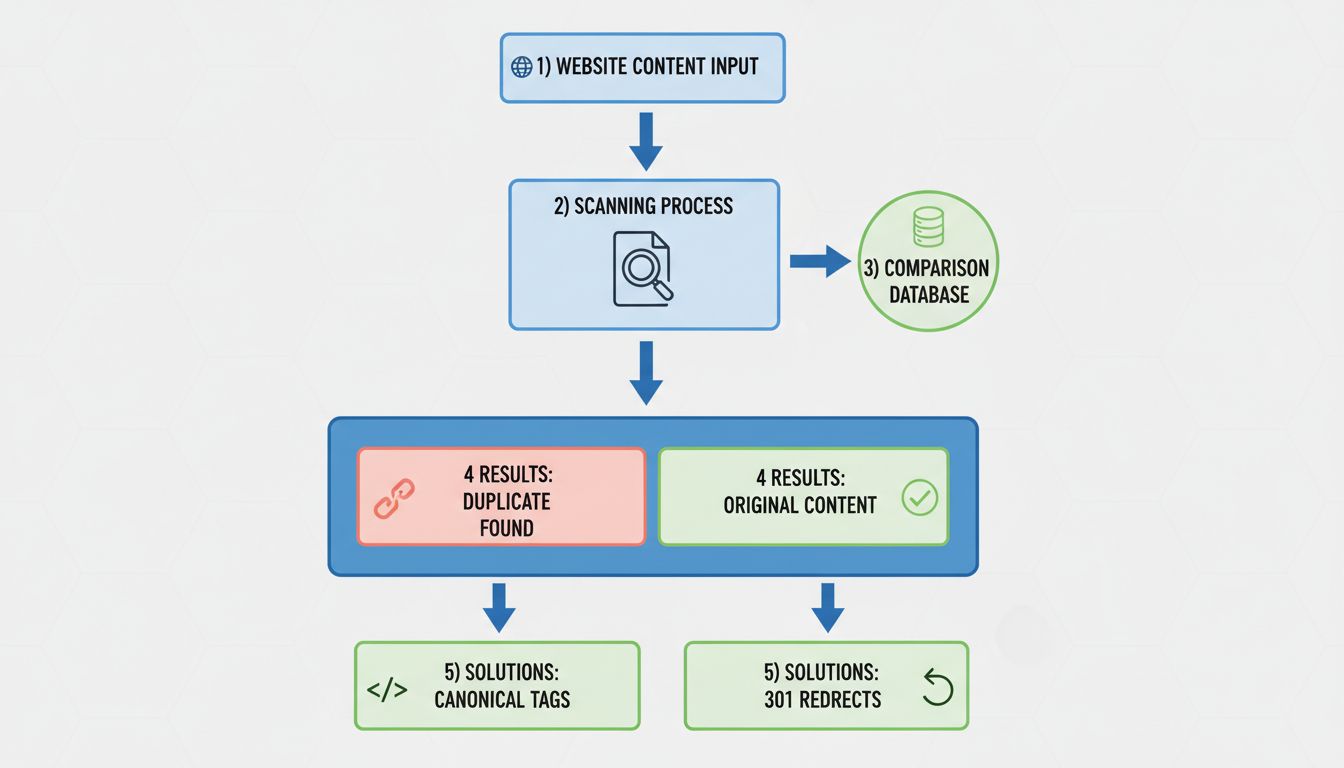

The most straightforward approach to identifying duplicate content involves using specialized third-party tools designed specifically for this purpose. These tools scan your website or individual pages and compare them against vast databases of indexed web content to identify matches and similarities.

Copyscape stands as one of the most widely recognized and trusted duplicate content checkers available today. This tool operates by analyzing a specific URL and searching for instances where that content appears elsewhere on the internet. Copyscape uses Google and Bing indices to perform comprehensive searches, making it highly effective at detecting external duplicates. The free version allows limited checks, while the premium version offers unlimited searches, detailed side-by-side comparisons, and automated monitoring through Copysentry, which notifies you when your content is copied. When Copyscape identifies duplicates, it highlights the matching text passages and provides a percentage of duplication, helping you understand the severity of the issue.

Siteliner specializes in detecting internal duplicate content—content that appears multiple times within your own website. This tool crawls your entire site and identifies pages with identical or near-identical content, which is particularly valuable for large websites with hundreds or thousands of pages. Siteliner provides detailed reports showing which pages contain duplicate content and offers recommendations for resolution. The free version scans up to 250 pages once per month, while the premium version offers unlimited scans and more detailed analysis.

Other notable tools in this category include Grammarly’s Plagiarism Checker, which integrates plagiarism detection into its comprehensive writing platform; Turnitin, widely used in academic settings but also valuable for professional content verification; Plagscan, which offers academic-focused plagiarism detection; and DupliChecker, a user-friendly free tool suitable for casual content creators and students.

A simple yet effective method for checking duplicate content involves using Google’s search functionality directly. This manual approach requires copying a distinctive sentence or paragraph from your content and searching for it in Google using quotation marks to find exact matches. For example, if you search for "This is a unique sentence from my content", Google will return all pages where this exact phrase appears. This method provides insight into how Google itself views your content and where duplicates may exist across the web.

The advantage of this manual approach is that it shows you exactly what Google has indexed and ranked, making it highly relevant to your SEO performance. However, this method is time-consuming for large websites and may not catch paraphrased or slightly modified versions of your content. For best results, select passages that are unique and specific to your content, as generic phrases will return too many results to be useful.
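Because the manual check becomes tedious once you have more than a handful of passages, it can help to generate the quoted queries with a script and open them one at a time. The sketch below only builds the search URL; the function name is hypothetical, and the `https://www.google.com/search?q=` address is assumed to be the standard search URL format.

```python
from urllib.parse import quote_plus


def exact_match_search_url(passage: str) -> str:
    """Build a search URL that looks for the passage as an exact phrase."""
    # Remove quotes already in the passage and collapse whitespace,
    # so the only quotation marks are the pair that wraps the phrase.
    cleaned = " ".join(passage.replace('"', "").split())
    # Assumed URL format; quote_plus encodes the quotes and the spaces.
    return "https://www.google.com/search?q=" + quote_plus(f'"{cleaned}"')


print(exact_match_search_url("This is a unique sentence from my content"))
```

Picking two or three distinctive sentences per page and checking each one tends to be more reliable than searching for a single long paragraph.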

Google Search Console provides built-in reports for identifying duplicate content issues within your own website. The Page indexing report (previously called Coverage) lists URLs that Google has treated as duplicates, with statuses such as "Duplicate without user-selected canonical". The URL Inspection tool lets you check a specific page, including which URL Google selected as its canonical. Older versions of Search Console also included an HTML Improvements section that highlighted duplicate meta descriptions and title tags; that report has since been retired, so these common sources of internal duplication are now easier to find with a site crawler.

Search Console reports duplicates but does not resolve them. The preferred domain setting (with or without www) and the URL Parameters tool offered in earlier versions have both been retired, and canonical tags are implemented in your site's HTML or HTTP headers rather than through the interface. What the tool does show is how Google has resolved duplicates across your whole site, which makes it an invaluable resource for large websites with complex URL structures and an essential part of any duplicate content management strategy.

Professional SEO tools like Screaming Frog, Sitebulb, and Ryte offer advanced website crawling capabilities that can identify duplicate content across your entire site. These tools crawl every page on your website and analyze various elements including page content, meta titles, meta descriptions, H1 tags, and other on-page elements. They provide detailed reports showing exact duplicates, near duplicates, and the percentage of duplication, allowing you to prioritize which issues to address first.
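The "percentage of duplication" such crawlers report can be approximated with a simple similarity measure. The sketch below uses overlapping three-word sequences (shingles) and Jaccard similarity. This is one common technique, not the method any of the named tools is known to use, and the function names are hypothetical.

```python
import re


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word sequences of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        # Very short texts are treated as a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(a: str, b: str, size: int = 3) -> float:
    """Jaccard similarity of the two texts' shingle sets, from 0.0 to 1.0."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa or not sb:
        return 0.0  # nothing to compare
    return len(sa & sb) / len(sa | sb)


print(similarity("a b c d e", "a b c d f"))  # 0.5
```

A score of 1.0 means the two texts share every shingle; what counts as a "near duplicate" is a threshold you choose for your own site.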

Understanding the different types of duplicate content helps you identify and address them more effectively. Internal duplicate content occurs when the same or very similar content appears on multiple URLs within your own website. This commonly happens when blog posts appear in full on category pages, tag pages, and the homepage simultaneously, or when product pages have multiple URL variations for different filters or parameters. External duplicate content occurs when your content is copied to other websites without permission, either through content scraping or unauthorized syndication. Unintentional duplicates often result from technical issues such as both www and non-www versions of your site being accessible, or pages with and without trailing slashes being treated as separate URLs.
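Exact internal duplicates, such as a post that appears in full on its own URL and on a tag page, can be found by fingerprinting each page's text and grouping URLs that share a fingerprint. The sketch below assumes you already have the extracted body text for each URL (for example from a crawler export); the function names and sample URLs are hypothetical.

```python
import hashlib
from collections import defaultdict


def fingerprint(text: str) -> str:
    """Hash the text after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def group_exact_duplicates(pages: dict[str, str]) -> list[list[str]]:
    """Return groups of URLs whose normalized text is identical."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    # Only fingerprints shared by two or more URLs are duplicates.
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


pages = {
    "/blog/post": "Hello  World",
    "/tag/news": "hello world",
    "/about": "About us",
}
print(group_exact_duplicates(pages))  # [['/blog/post', '/tag/news']]
```

Hashing only catches identical text after normalization; pages that differ by a sentence or two need a similarity measure instead.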

| Tool | Type | Best For | Pricing | Key Features |
|---|---|---|---|---|
| Copyscape | External | Finding copied content across the web | Free (limited) / $10+/month | Extensive database, side-by-side comparison, Copysentry monitoring |
| Siteliner | Internal | Detecting duplicates within your site | Free (limited) / $29+/month | Full site crawl, detailed reports, SEO-focused analysis |
| Google Search Console | Internal | Monitoring duplicates at scale | Free | Page indexing (Coverage) report, URL inspection, Google-selected canonical reporting |
| Screaming Frog | Internal | Comprehensive technical SEO analysis | Free (limited) / $199/year | Advanced crawling, detailed duplicate detection, multiple export options |
| Grammarly | External | Content originality checking | Free (limited) / $12+/month | Grammar and plagiarism combined, browser integration |
| Turnitin | External | Academic and professional plagiarism | Custom pricing | Comprehensive database, detailed reports, multi-language support |
| Sitebulb | Internal | Technical SEO auditing | $99+/month | Visual reports, duplicate analysis, actionable recommendations |
| Ryte | Internal | Website optimization | Custom pricing | Duplicate detection, on-page analysis, continuous monitoring |

Creating unique, valuable content remains the most effective prevention strategy. Each page on your website should serve a distinct purpose and provide unique value to users. Before creating new content, assess whether it duplicates existing pages or if the content could be merged into a single, more comprehensive resource. This approach not only prevents duplicate content issues but also improves user experience by reducing confusion and providing more authoritative, detailed information.

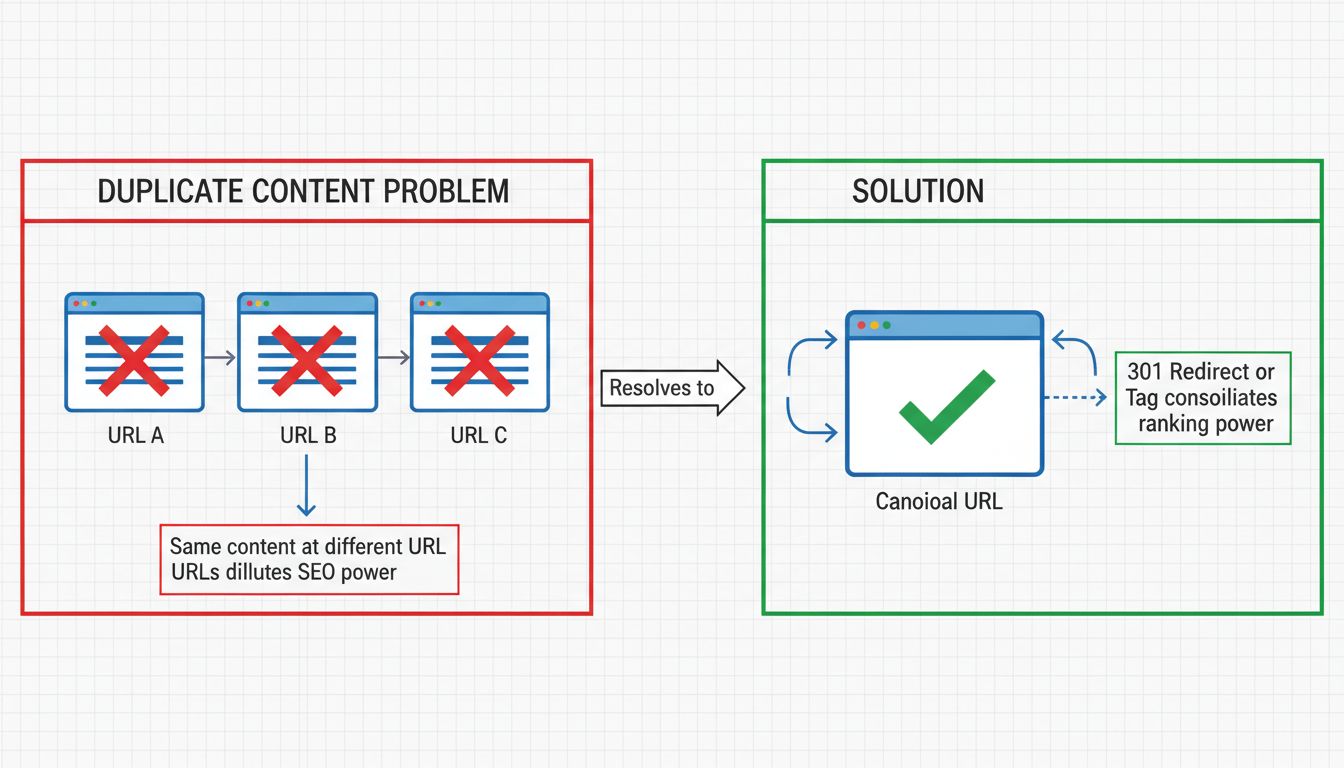

Implementing canonical tags (rel=canonical) is essential for managing duplicate content that cannot be eliminated. A canonical tag tells search engines which version of a page is the preferred version to index and rank. This is particularly important for e-commerce sites with product variations, category pagination, or multiple URL parameters that generate similar content. The canonical tag should point to the preferred version, consolidating ranking signals and preventing link equity from being split across duplicate pages.
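In the page itself, the canonical tag is a `<link rel="canonical" href="...">` element in the `<head>`. As a quick way to verify what a page declares, the sketch below reads that element using Python's standard `html.parser` module. The class name, function name, and sample URL are illustrative assumptions.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Record the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        # rel may hold several space-separated values.
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


def find_canonical(html: str):
    """Return the declared canonical URL, or None if the page has none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


sample = """<html><head>
<link rel="canonical" href="https://www.example.com/shoes/">
</head><body>...</body></html>"""
print(find_canonical(sample))  # https://www.example.com/shoes/
```

Running this over every URL variation of a product page should return the same preferred URL each time; a variation that returns `None` or points to itself is a candidate for fixing.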

Using 301 redirects is the appropriate solution when you have duplicate pages that should not both exist. A 301 redirect is a permanent redirect that tells search engines and users that a page has moved to a new location. This preserves link equity and ranking signals while ensuring users and search engines are directed to the correct page. This approach is particularly useful when consolidating old URLs, removing duplicate product pages, or standardizing your URL structure.

Handling URL parameters deliberately helps search engines understand how they affect your content. Some parameters do not change the content (such as tracking parameters), while others create distinct versions (such as sorting or filtering options). Google Search Console used to offer a URL Parameters tool for this, but it was retired in 2022. The same guidance is now given through canonical tags, consistent internal linking, and, where appropriate, robots.txt rules, so that Google crawls and indexes your preferred pages instead of every parameter variation.
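Since tracking parameters usually leave the content unchanged, one way to work out the preferred URL for a parameterized address is to drop them and keep the rest. The sketch below does this with the standard `urllib.parse` module. The list of tracking parameters (`utm_*`, `gclid`, `fbclid`) is an assumption and should be adjusted to the parameters your site actually receives.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; extend for your own analytics setup.
TRACKING_PREFIXES = ("utm_",)
TRACKING_NAMES = {"gclid", "fbclid"}


def strip_tracking_params(url: str) -> str:
    """Return the URL without tracking parameters, keeping all others."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
        and key.lower() not in TRACKING_NAMES
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


print(strip_tracking_params(
    "https://example.com/shoes?color=red&utm_source=news&gclid=abc"
))  # https://example.com/shoes?color=red
```

Parameters that do change the content, such as `color` here, are kept on purpose; whether those variations deserve their own indexed URL is a separate decision.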

Maintaining consistent URL structure prevents accidental duplication from www/non-www variations or trailing slash inconsistencies. Choose a preferred URL format and implement 301 redirects to ensure all traffic flows to the canonical version. This simple step eliminates a common source of internal duplicate content that many website owners overlook.
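The rule "one preferred format, everything else redirects" can be expressed as a small function that maps any requested URL to its preferred form and reports whether a 301 is needed. The sketch below assumes the preferred format is HTTPS, the www host, and a trailing slash on page paths. The domain and function names are hypothetical, and a real site would usually apply the same rule in its web server or CDN configuration.

```python
from typing import Optional, Tuple
from urllib.parse import urlsplit, urlunsplit

BARE_DOMAIN = "example.com"            # hypothetical site
PREFERRED_HOST = "www." + BARE_DOMAIN  # assumed preference: www


def preferred_url(url: str) -> str:
    """Map a URL to the preferred scheme, host, and trailing-slash form."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host in (BARE_DOMAIN, PREFERRED_HOST):
        host = PREFERRED_HOST
    path = parts.path or "/"
    last_segment = path.rsplit("/", 1)[-1]
    # Add a trailing slash to page paths, but not to files like /logo.png.
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    return urlunsplit(parts._replace(scheme="https", netloc=host, path=path))


def redirect_for(url: str) -> Optional[Tuple[int, str]]:
    """Return (301, target) when the URL is not in preferred form."""
    target = preferred_url(url)
    return None if target == url else (301, target)


print(redirect_for("http://example.com/blog"))
print(redirect_for("https://www.example.com/blog/"))
```

The first call reports a 301 to the preferred URL and the second returns `None`, which is the check you want every internal link and sitemap entry to pass.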

Duplicate content creates several significant challenges for search engine optimization. When search engines encounter multiple versions of the same content, they must decide which version to index and rank, often resulting in lower rankings for all versions. This dilution of ranking signals means that backlinks, social signals, and other ranking factors are split across multiple URLs rather than consolidated on a single authoritative page. Additionally, duplicate content can confuse search engine crawlers, causing them to waste crawl budget on duplicate pages rather than discovering new, unique content on your site.

From a user experience perspective, duplicate content can increase bounce rates and reduce time on site as users encounter the same information multiple times and become frustrated. This negative user behavior signals to search engines that your content may not be valuable, further damaging your rankings. Furthermore, if your content is copied by other websites without proper attribution, you may face reputation damage and loss of authority as other sites receive credit for your original work.

For sophisticated websites with complex structures, advanced detection strategies become necessary. Monitoring RSS feeds helps identify when your content is being scraped automatically, as many content thieves use RSS feeds to automatically copy new content. By limiting RSS feeds to excerpts rather than full content and including a link back to your site, you can reduce the risk of unauthorized copying. Implementing DMCA protection through services like DMCA.com provides legal recourse when your content is copied, allowing you to file takedown notices and protect your intellectual property.

Regular content audits should be part of your ongoing SEO maintenance routine. Quarterly or semi-annual audits using tools like Screaming Frog or Sitebulb help identify new duplicate content issues before they impact your rankings. These audits should examine not just page content but also metadata, headings, and other on-page elements that can create duplicate content problems. Setting up Google Alerts for unique phrases from your content allows you to receive notifications when those phrases appear elsewhere on the web, helping you identify unauthorized copying quickly.
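For the metadata part of an audit, a crawler export can be checked for repeated titles or meta descriptions with a short script. The sketch below assumes the export has been loaded into a dictionary keyed by URL with `title` and `description` fields. That structure, the function name, and the sample data are all hypothetical.

```python
from collections import defaultdict


def find_duplicate_values(
    pages: dict[str, dict[str, str]], field: str
) -> dict[str, list[str]]:
    """Group URLs that share the same value for a metadata field."""
    seen = defaultdict(list)
    for url, metadata in pages.items():
        # Compare case-insensitively and ignore extra whitespace.
        value = " ".join(metadata.get(field, "").lower().split())
        if value:
            seen[value].append(url)
    return {value: sorted(urls) for value, urls in seen.items() if len(urls) > 1}


crawl = {
    "/shoes": {"title": "Running Shoes", "description": "Shop shoes."},
    "/shoes?sort=price": {"title": "Running  shoes", "description": "Shop shoes."},
    "/about": {"title": "About Us", "description": "Who we are."},
}
print(find_duplicate_values(crawl, "title"))
```

Running the same check for `description` and for H1 text covers the on-page elements the audit is meant to examine.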

Checking for duplicate content is a critical part of modern SEO strategy, and it requires both automated tools and manual oversight. By combining third-party tools like Copyscape and Siteliner with Google Search Console and professional website crawlers, you can comprehensively identify and address duplicate content issues. Whether you’re dealing with internal duplicates caused by technical issues or external duplicates from content scraping, the solutions (canonical tags, 301 redirects, and unique content creation) are well-established and effective. Regular monitoring and proactive prevention help your website maintain its search visibility and give users the unique, valuable content they expect. In 2025, as search engines become increasingly sophisticated at detecting duplicate content and filtering it out of results, maintaining content originality matters more than ever for achieving and sustaining strong search rankings.

Just as duplicate content harms SEO, duplicate affiliate tracking can undermine your program's integrity. PostAffiliatePro provides transparent, accurate tracking that eliminates commission disputes and ensures every affiliate sale is properly attributed. Maintain complete visibility and control over your affiliate network.

