How Can Keyword Density Affect My Affiliate Site's SEO? 2025 Guide

Learn how keyword density impacts affiliate site SEO in 2025. Discover optimal keyword density ranges, best practices, and how to avoid penalties while maintain...

Discover why keyword density is no longer a primary SEO ranking factor. Learn modern SEO strategies that focus on user intent, content quality, and semantic relevance instead of keyword percentages.

Keyword density matters little for SEO because modern search algorithms no longer rely on it to understand what a page is about. Instead, search engines prioritize content quality, user intent, semantic relevance, and user experience signals.

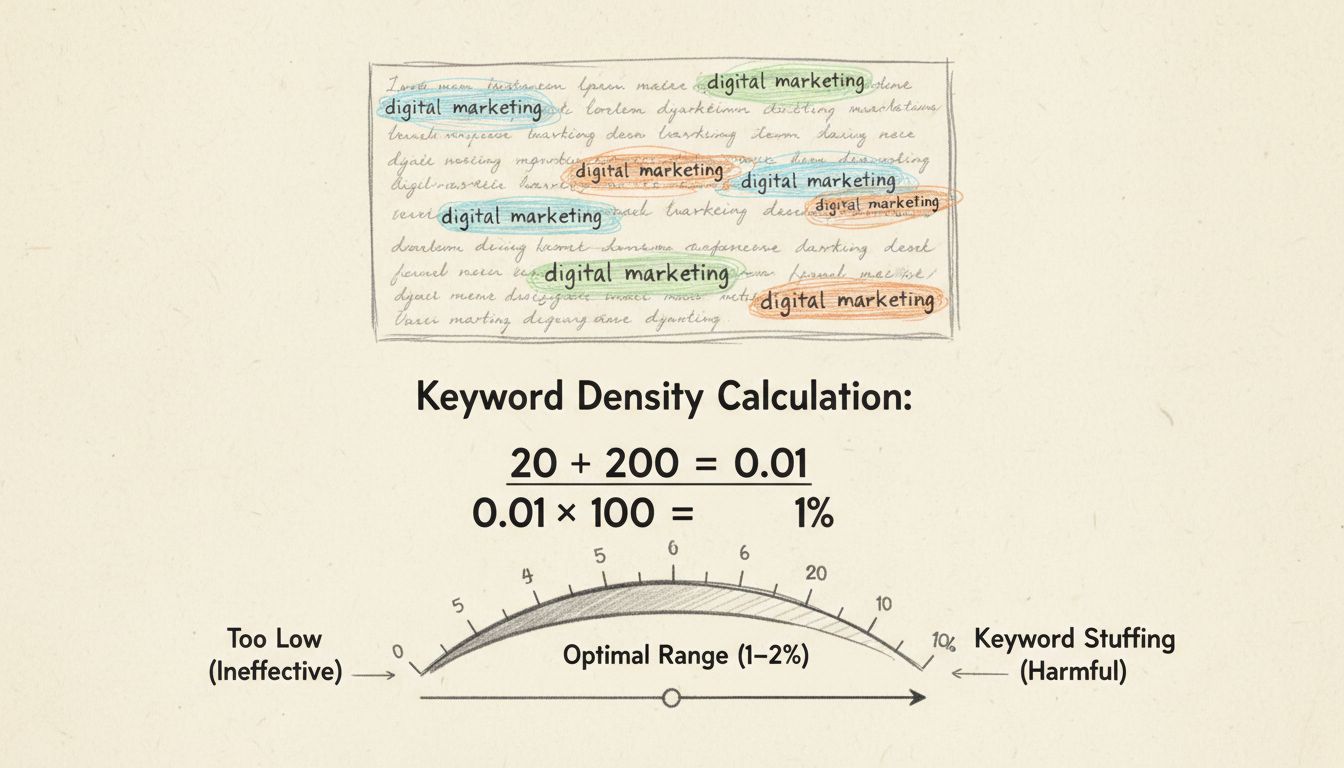

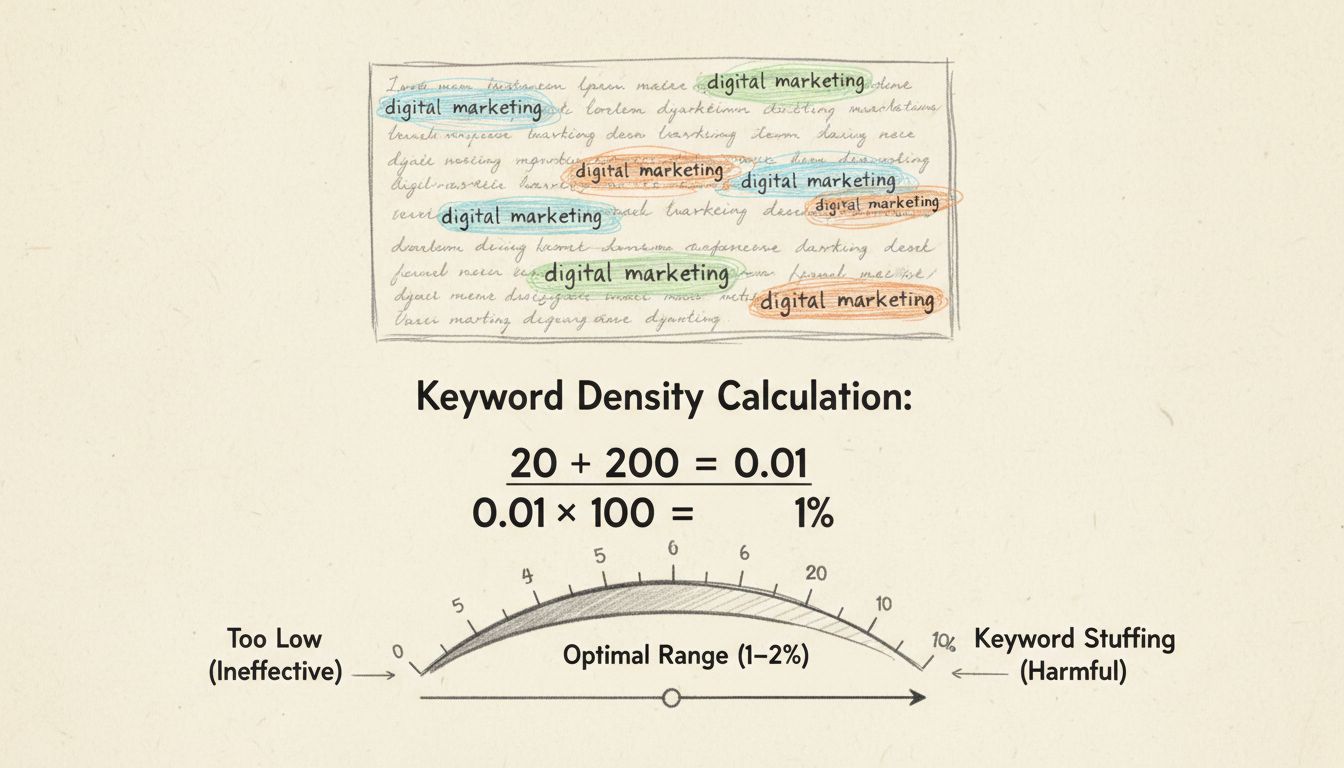

Keyword density refers to the percentage of times a target keyword appears within a piece of content relative to the total word count. For example, if a keyword appears 6 times in a 600-word article, the keyword density would be 1%. While this metric was once considered crucial for search engine optimization, modern search algorithms have evolved dramatically, making keyword density a largely irrelevant factor in determining search rankings. Today’s search engines are sophisticated enough to understand content meaning, context, and user intent without relying on simple keyword frequency calculations.
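The calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not a tool any search engine uses: the function name `keyword_density`, the simple regex tokenizer, and the choice to count a multi-word phrase as one occurrence against the total word count are all assumptions made for the example.

```python
import re

def keyword_density(text: str, keyword: str) -> float:
    """Return keyword occurrences as a percentage of the total word count.

    Assumption: words are runs of letters, digits, or apostrophes, and a
    multi-word keyword counts once per exact phrase match.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    phrase = re.findall(r"[a-z0-9']+", keyword.lower())
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    # Slide a window of the phrase length across the text and count matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return 100.0 * hits / len(words)

# Synthetic 600-word text in which "shoes" appears 6 times.
sample = ("shoes " + "filler " * 99) * 6
print(round(keyword_density(sample, "shoes"), 2))  # 1.0
```

The synthetic sample reproduces the 6-in-600 example, which works out to 1%.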

The shift away from keyword density represents one of the most significant changes in SEO strategy over the past fifteen years. Search engines like Google have invested billions of dollars in developing artificial intelligence and natural language processing technologies that can comprehend the nuanced meaning of content far beyond simple keyword counting. This evolution means that content creators can now focus on writing naturally for human readers while still achieving excellent search visibility, rather than forcing keywords into text to hit an arbitrary percentage target.

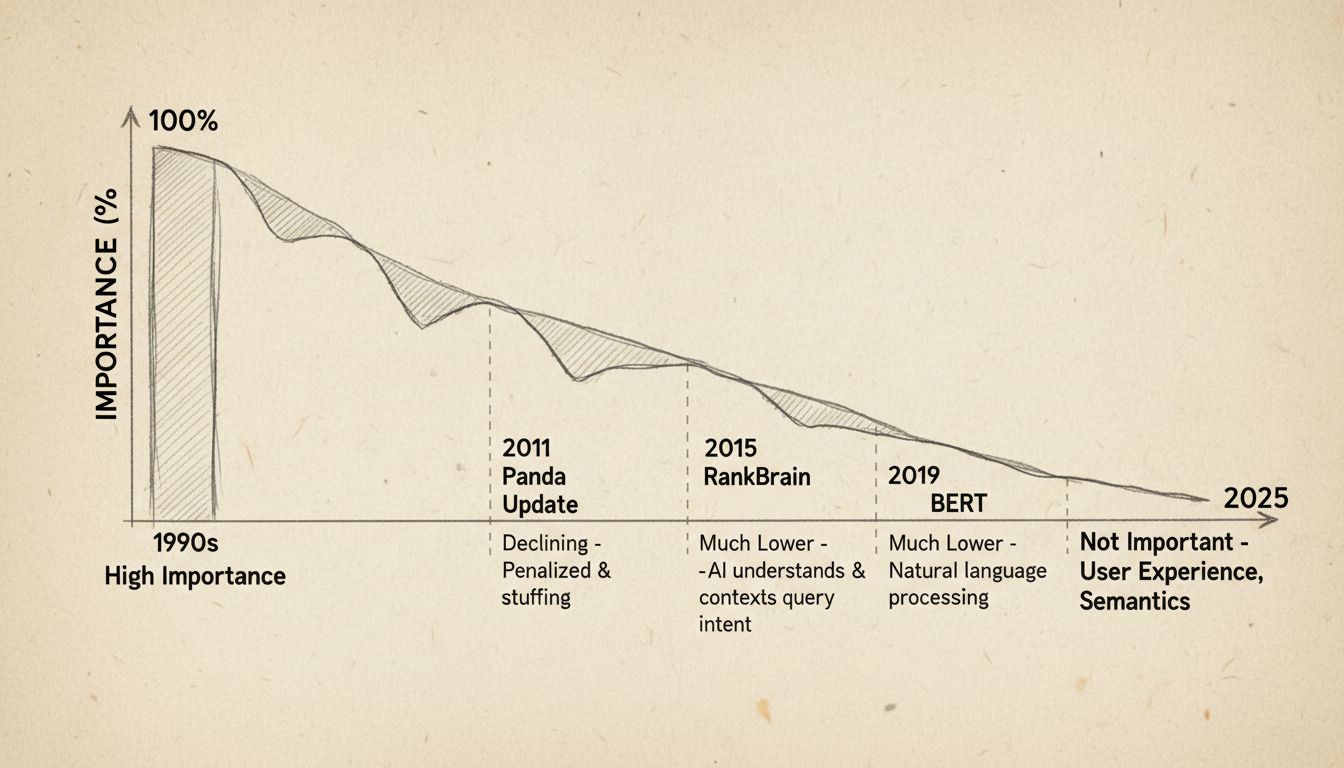

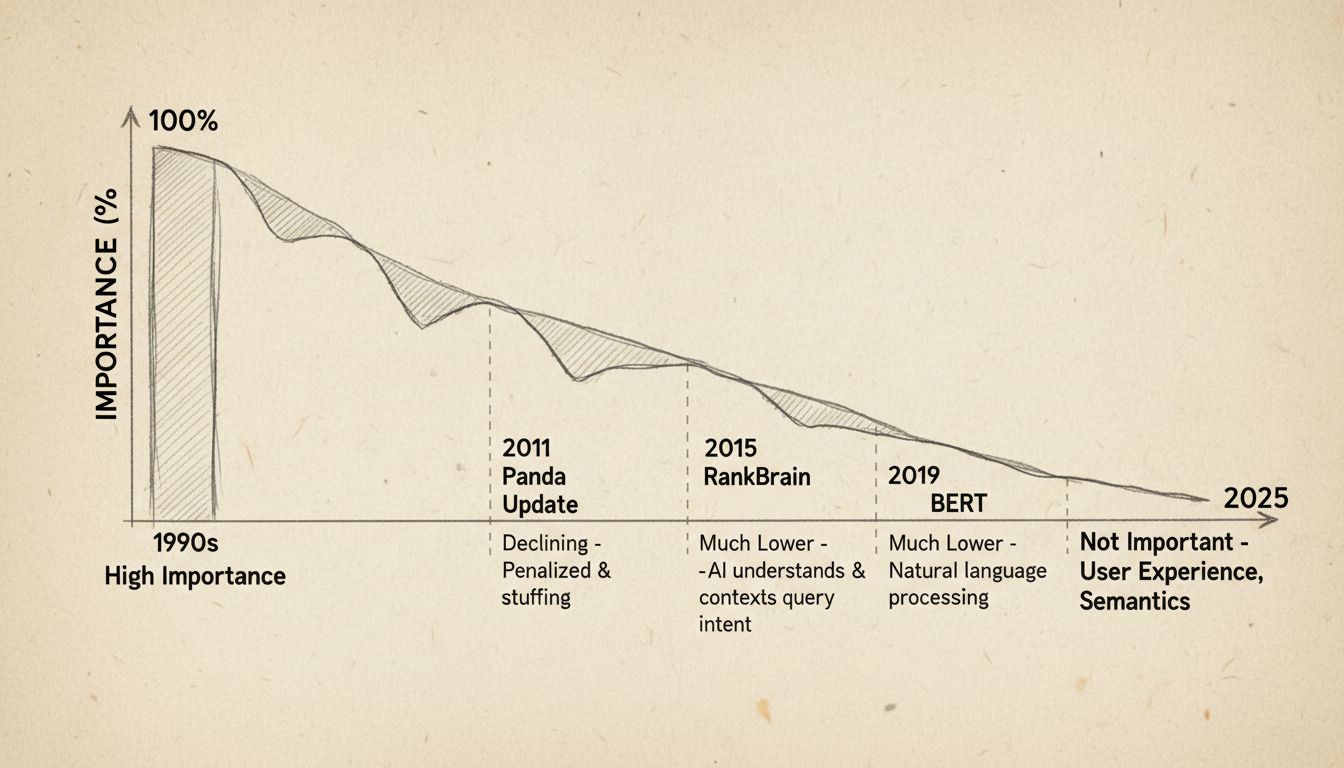

In the 1990s and early 2000s, keyword density was genuinely important for SEO because search engine algorithms were relatively primitive. Early search engines like AltaVista and the initial versions of Google relied heavily on on-page text analysis to determine what a webpage was about. If a page mentioned “best running shoes” ten times in a 500-word article, the algorithm could reasonably infer that the page was relevant for that search query. This direct correlation between keyword frequency and relevance made keyword density a logical and effective optimization tactic.

However, this simplicity created a significant problem: webmasters began exploiting keyword density through a practice called “keyword stuffing.” This involved inserting keywords unnaturally and excessively into content, often making pages unreadable and providing poor user experiences. Some practitioners even hid keywords using techniques like white text on white backgrounds or placing keyword lists in HTML comments. While these tactics temporarily boosted rankings, they degraded the quality of search results, which threatened Google’s core business model of providing users with the most relevant and helpful information.

Google’s response to keyword stuffing and low-quality content was a series of revolutionary algorithm updates that systematically dismantled the effectiveness of keyword density as a ranking factor. These updates represent a fundamental shift in how search engines understand and evaluate content.

In February 2011, Google introduced the Panda algorithm update, which was specifically designed to combat low-quality content and content farms. Panda introduced a site-wide quality filter that assessed whether content was valuable, original, and engaging. The update targeted websites that produced thin, duplicate, or poorly written content designed primarily to rank for keywords rather than to serve users. Pages with high keyword density but low-quality content suddenly saw dramatic drops in rankings, even if they technically had the “perfect” keyword density percentage.

Panda marked the first major blow against keyword density as a ranking factor. Google’s Matt Cutts, a senior engineer at the time, publicly stated that keyword density was not how the algorithm worked, effectively ending the debate for informed SEO professionals. The update forced the industry to shift focus from mechanical keyword metrics to more holistic concepts like content depth, originality, and user value.

The Hummingbird update, released in September 2013, represented an even more dramatic shift in search technology. Rather than simply matching individual keywords to pages, Hummingbird was designed to understand the meaning behind entire search queries and the semantic relationships between words. This update introduced “semantic search,” which means Google could now understand that queries like “what is the best place to eat Chinese food near me” were fundamentally about finding a nearby restaurant, not just matching the individual words in the query.

With semantic search, Google no longer needed to see an exact-match keyword repeated on a page to understand its topic. The algorithm could recognize that pages about “dog photos,” “pictures of dogs,” and “canine images” were all addressing the same user need. This technological leap made keyword density completely irrelevant because the algorithm could now match user intent to content meaning regardless of specific keyword frequency. Hummingbird officially marked the transition from keyword-focused SEO to topic-focused SEO.

Google’s RankBrain system, introduced in 2015, added another layer of sophistication by incorporating machine learning to understand search queries and user behavior. RankBrain was specifically designed to handle the approximately 15% of Google searches that had never been seen before. Rather than relying on pre-programmed rules, RankBrain learned from vast amounts of search data to make intelligent predictions about which pages would satisfy users.

Crucially, RankBrain incorporated user behavior signals as feedback to refine its understanding. Metrics like click-through rate, dwell time (how long users stay on a page), and pogo-sticking (immediately returning to search results) became powerful indicators of whether a page actually satisfied the user’s intent. If users consistently clicked on a particular result and stayed on that page, RankBrain learned that this page was likely a good answer, even if its keyword density didn’t match traditional optimization guidelines. This update made user satisfaction a machine-learned component of the ranking algorithm, further diminishing the importance of keyword frequency.

The next major evolution came with BERT (Bidirectional Encoder Representations from Transformers), integrated into Google’s algorithm in 2019. BERT is an advanced natural language processing model that understands the subtle nuances of language by analyzing the context of words in relation to all other words in a sentence, both before and after. This bidirectional analysis allows BERT to interpret meaning far more accurately than earlier keyword-matching systems.

Google provided a clear example: the query “2019 brazil traveler to usa need a visa” was previously misunderstood because the algorithm didn’t grasp the importance of the word “to” in determining directionality. With BERT, Google correctly understood that the query was about a Brazilian citizen traveling to the United States, not the reverse. An algorithm sophisticated enough to parse the meaning of a two-letter preposition has moved far beyond counting keyword occurrences. BERT represents a major advance in contextual understanding in search, leaving little reason to focus on keyword density.

Modern search engines have evolved to prioritize factors that directly correlate with user satisfaction and content quality. Understanding these factors is essential for anyone creating content in 2025.

| SEO Factor | Importance Level | Why It Matters | How to Optimize |
|---|---|---|---|
| User Intent Alignment | Critical | Content must match what users are actually searching for | Research search queries and create content that answers the specific question users are asking |
| Content Quality & Depth | Critical | Comprehensive, well-researched content ranks better | Create detailed, authoritative content that covers topics thoroughly from multiple angles |
| E-E-A-T Signals | Critical | Experience, Expertise, Authoritativeness, and Trustworthiness are paramount | Demonstrate credentials, cite authoritative sources, and build domain authority |
| User Experience (UX) | Critical | Page speed, mobile-friendliness, and readability directly impact rankings | Optimize Core Web Vitals, ensure mobile responsiveness, use clear formatting |
| Topical Authority | High | Building comprehensive coverage of a topic signals expertise | Create topic clusters with pillar pages and related cluster content |
| Semantic Relevance | High | Using related terms and entities helps search engines understand context | Include synonyms, related concepts, and co-occurring entities naturally |
| Keyword Placement | Medium | Strategic placement in titles, headers, and first paragraph helps | Use keywords naturally in important locations without forcing them |
| Keyword Density | Low | No longer a primary ranking factor | Use keywords naturally; don’t calculate or target specific percentages |

The fundamental reason keyword density is no longer important relates to how modern search engines process information. Early search algorithms used simple text analysis: they counted keyword occurrences and calculated percentages because that was computationally efficient and provided a reasonable proxy for relevance. However, this approach had critical flaws that modern algorithms have overcome.

First, keyword density tells search engines nothing about the context in which keywords appear. A page could mention “running shoes” frequently but discuss them in a negative context, yet a density-based algorithm would still consider it relevant. Second, keyword density fails to account for semantic relationships between words. A page about “canine companions” and one about “dog friends” address the same subject, yet a count of the exact keyword “dogs” would register neither. Third, keyword density is easily manipulated through repetition, which is why it became a target for spam tactics.

Modern algorithms solve these problems through semantic understanding, entity recognition, and user behavior analysis. Instead of counting keywords, Google’s algorithms now understand the meaning of entire passages, recognize entities and their relationships, and learn from how real users interact with content. This represents a fundamental shift from mechanical text analysis to genuine language comprehension.

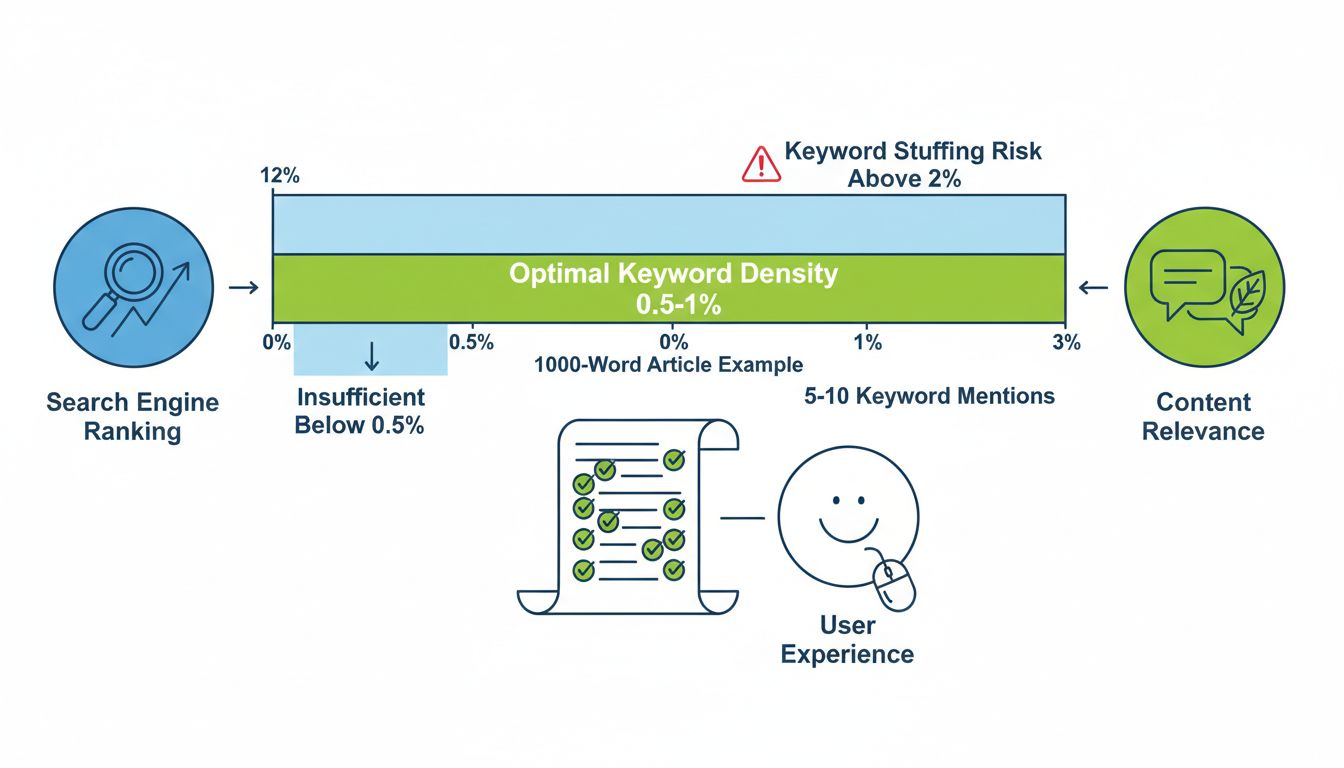

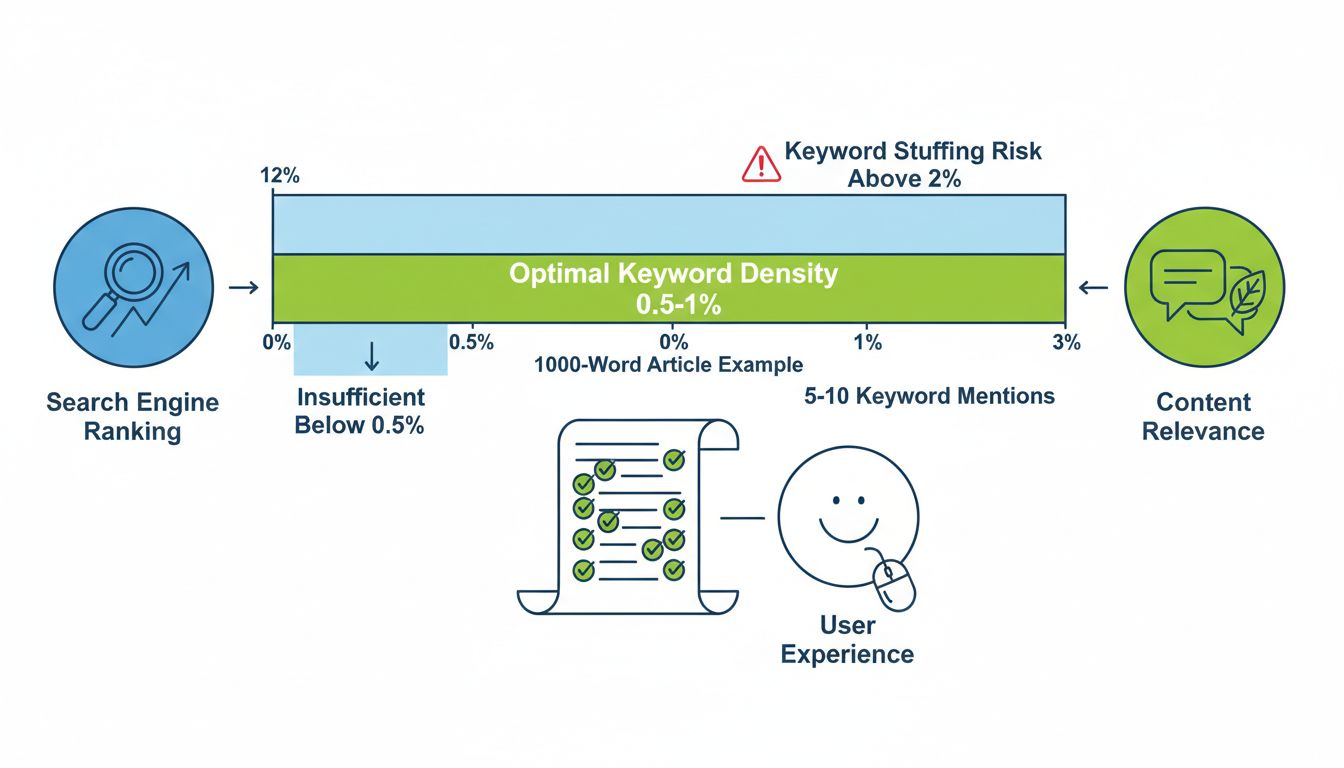

Industry research from 2025 suggests that the optimal keyword density is approximately 0.5% to 1%, meaning a keyword should appear 3 to 6 times in a 600-word article or 5 to 10 times in a 1,000-word article. However, this recommendation should not be interpreted as a target to achieve. Rather, it represents a natural frequency that emerges when writing quality content about a specific topic without forcing keyword repetition.
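The arithmetic behind these figures can be checked with a short sketch. The function name `natural_occurrence_range` and its default bounds are hypothetical, taken only from the 0.5% to 1% range quoted above; the result is meant as a sanity check on a finished draft, not as a target to write toward.

```python
def natural_occurrence_range(word_count: int,
                             low_pct: float = 0.5,
                             high_pct: float = 1.0) -> tuple[int, int]:
    """Convert a density range (in percent) into occurrence counts.

    Assumption: the defaults mirror the 0.5% to 1% range quoted in the text.
    """
    low = round(word_count * low_pct / 100)
    high = round(word_count * high_pct / 100)
    return low, high

print(natural_occurrence_range(600))   # (3, 6)
print(natural_occurrence_range(1000))  # (5, 10)
```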

The key insight is that if you’re consciously trying to hit a specific keyword density percentage, you’re likely doing something wrong. High-ranking content typically achieves its keyword frequency naturally through comprehensive topic coverage, not through deliberate keyword insertion. Content that ranks #1 on Google for competitive keywords typically does so because it provides the most comprehensive, authoritative, and user-friendly answer to the search query, not because it hit a specific keyword density target.

Rather than obsessing over keyword density, successful SEO in 2025 requires a holistic approach that addresses multiple dimensions of content quality and relevance.

Focus on User Intent: Before writing any content, thoroughly research what users are actually searching for and what they expect to find. Are they looking for information, trying to navigate to a specific website, investigating a purchase decision, or ready to buy? Your content should directly address the specific intent behind the search query.

Create Comprehensive Content: Write content that thoroughly covers your topic from multiple angles. Include relevant subtopics, answer common questions, and provide more depth than competing pages. Longer, well-structured content that comprehensively addresses a topic tends to rank better than shorter, superficial content, regardless of keyword density.

Build Topical Authority: Rather than creating isolated pages optimized for individual keywords, develop a content architecture where multiple related pages link to each other in a logical structure. This “topic cluster” approach signals to search engines that your website has deep expertise in a subject area.

Optimize for User Experience: Ensure your pages load quickly, work perfectly on mobile devices, and are easy to read. Use clear headings, short paragraphs, bullet points, and visual elements to break up text. These user experience factors directly impact both search rankings and conversion rates.

Demonstrate E-E-A-T: Show your expertise through detailed knowledge, cite authoritative sources, include author credentials, and build trust through transparent, accurate information. For topics related to health, finance, or legal matters, demonstrating formal qualifications is particularly important.

Use Keywords Naturally: Include your target keyword in strategic locations like the title tag, main heading, and first paragraph, but only where it makes sense. Use synonyms and related terms throughout your content to provide semantic richness without repetition.
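The placement advice in the last point can be spot-checked with a small script. This is only a sketch: the function name `keyword_placement` and its three text inputs are assumptions for illustration, and a plain case-insensitive substring match will miss the synonyms and related terms that search engines also recognize.

```python
def keyword_placement(keyword: str, title: str, heading: str,
                      first_paragraph: str) -> dict[str, bool]:
    """Report whether the keyword appears in each strategic location.

    Assumption: a case-insensitive substring match is good enough for a
    quick editorial check.
    """
    kw = keyword.lower()
    return {
        "title": kw in title.lower(),
        "heading": kw in heading.lower(),
        "first_paragraph": kw in first_paragraph.lower(),
    }

report = keyword_placement(
    "running shoes",
    title="Best Running Shoes of 2025",
    heading="How to choose running shoes",
    first_paragraph="Picking footwear is hard.",
)
print(report)
```

A `False` entry is a prompt to reread that section, not an instruction to force the phrase in where it does not fit.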

The evolution of search engine technology has shown that keyword density is not a meaningful SEO metric. Google’s own engineers have stated this clearly, and years of algorithmic updates have systematically eliminated any ranking benefit from hitting specific keyword density percentages. Optimizing for keyword density in 2025 means chasing a metric that search engines stopped relying on over a decade ago.

However, this does not mean keywords are unimportant. Keywords remain essential for helping search engines understand what your content is about and for matching your content to relevant search queries. The difference is that modern search engines understand keywords in context, recognize synonyms and related terms, and prioritize user satisfaction over keyword frequency.

The most effective SEO strategy is to stop thinking about keyword density entirely and instead focus on creating the highest-quality, most comprehensive, most user-friendly content possible for your target audience. Write naturally, cover your topic thoroughly, demonstrate expertise and trustworthiness, and optimize for user experience. When you do these things well, appropriate keyword usage will happen naturally, and your content will rank better than pages that were optimized for outdated metrics like keyword density.

PostAffiliatePro recognizes that modern affiliate marketing success depends on content that ranks well and converts visitors into active affiliates. Our platform provides the tools you need to track which content drives the most valuable affiliate traffic, allowing you to focus your efforts on creating the types of content that actually generate results. By combining PostAffiliatePro’s advanced tracking and analytics with modern SEO best practices focused on quality and user intent, you can build a sustainable, high-performing affiliate program.

Stop obsessing over keyword percentages and start building topical authority with PostAffiliatePro's advanced content management and affiliate tracking capabilities. Our platform helps you create high-quality, user-focused content that ranks better and converts more visitors into affiliates.
