How Does Latent Semantic Indexing Affect SEO? Complete 2025 Guide


Discover whether Google uses LSI keywords and learn how modern semantic search actually works. Understand BERT, RankBrain, and entity-based optimization for better rankings.

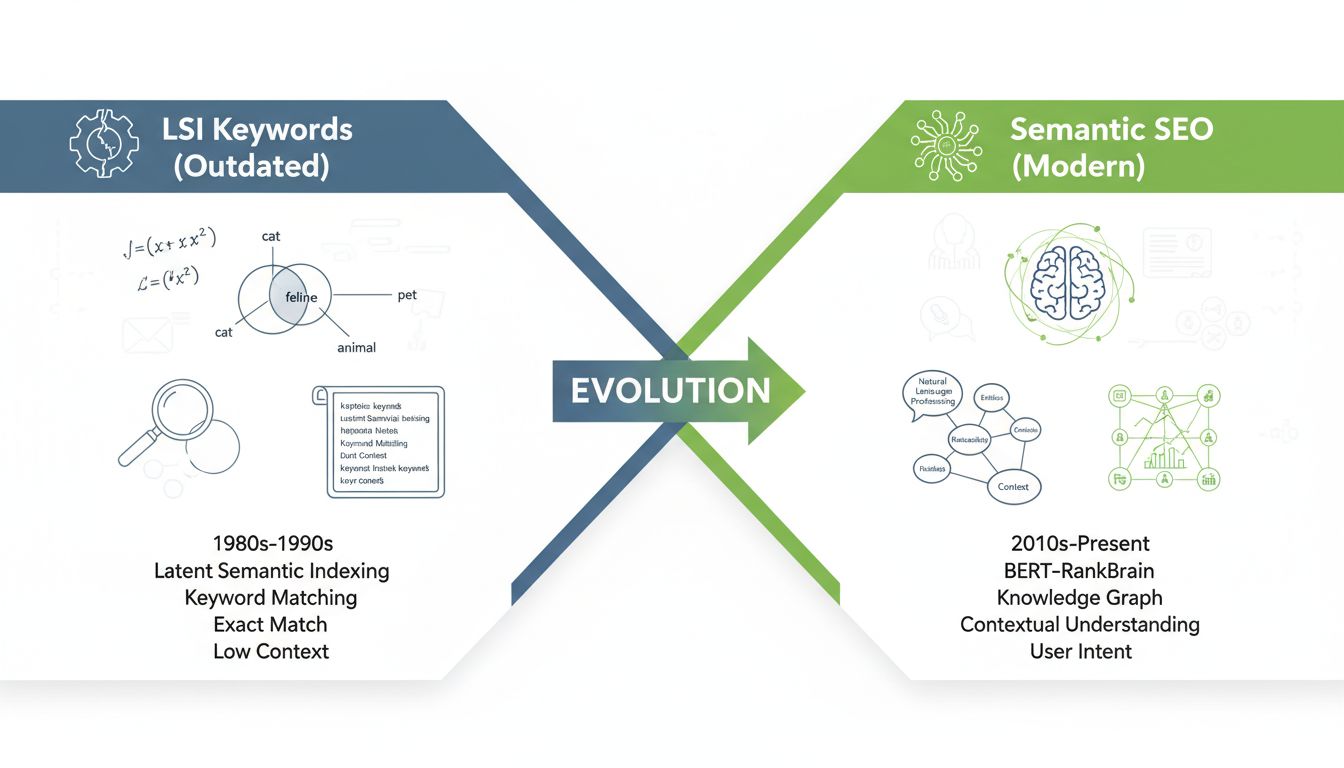

No, Google does not use LSI (Latent Semantic Indexing) keywords. Google's John Mueller has stated publicly that there is no such thing as LSI keywords, and latent semantic indexing itself is a late-1980s information retrieval technique that predates the web. Instead, Google uses semantic search technologies such as BERT, RankBrain, and the Knowledge Graph to understand content meaning and context.

The term “LSI keywords” has persisted in SEO circles for over a decade, creating widespread confusion about how Google actually ranks content. Many marketers still believe that including lists of semantically related terms is a ranking factor, when in reality Google says it does not use LSI and there is no evidence that it ever relied on it. Understanding the difference between outdated LSI theory and modern semantic search is crucial for anyone managing affiliate programs or creating content that needs to rank well in 2025.

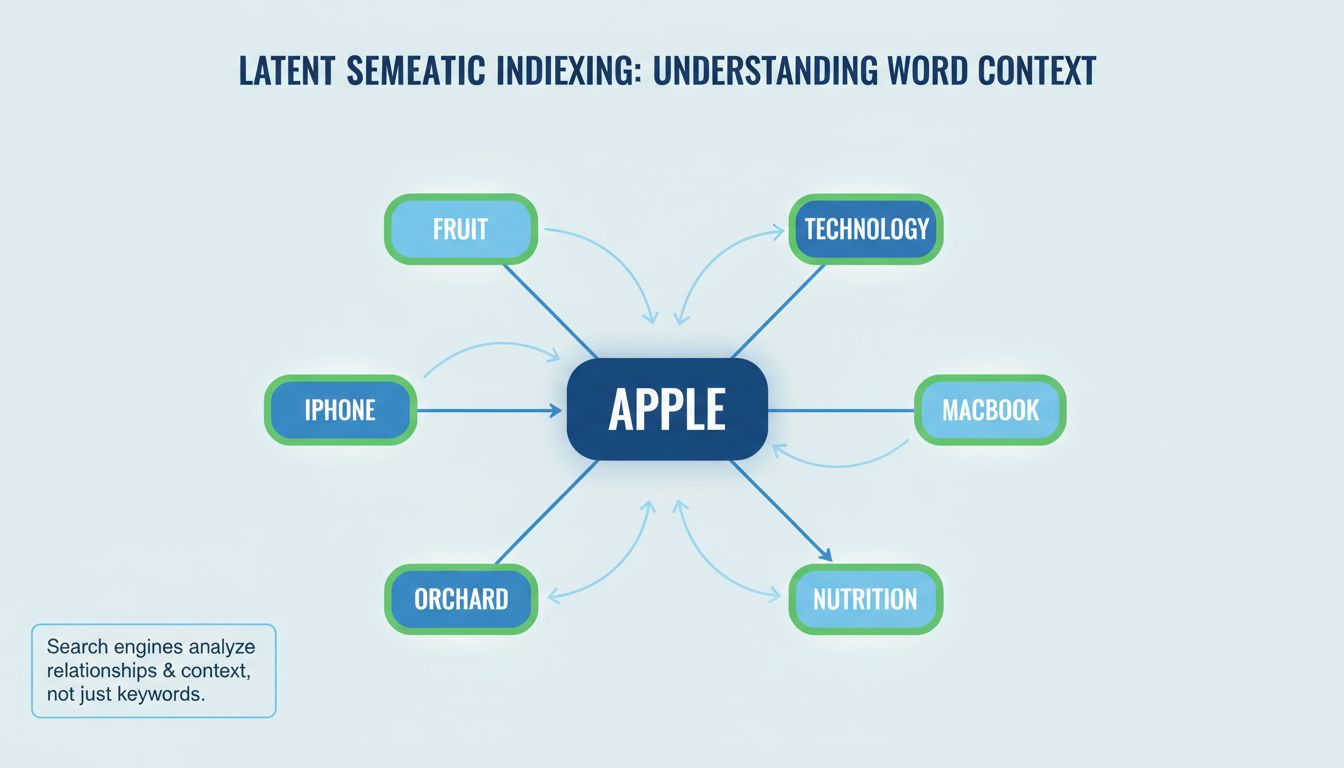

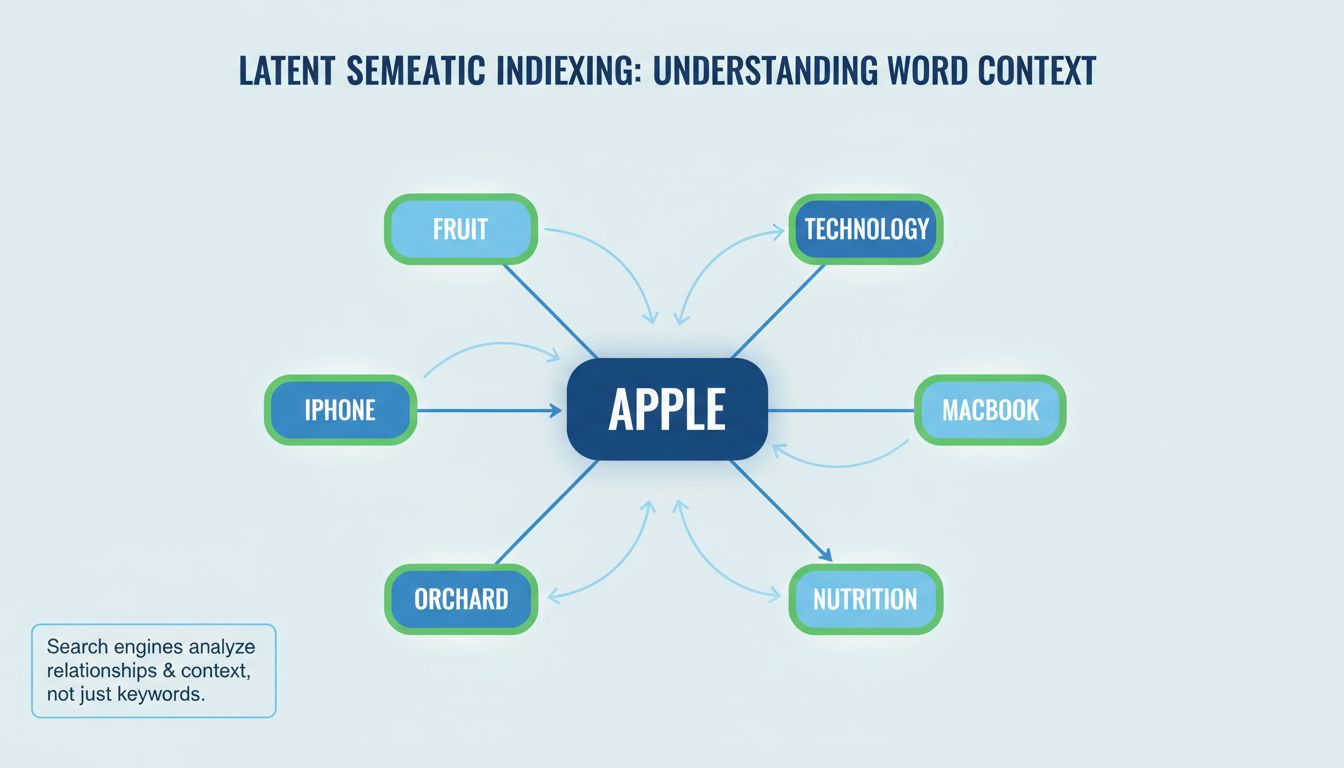

Latent Semantic Indexing was a mathematical technique developed in the late 1980s to analyze relationships between words in document sets. The method applies singular value decomposition to a term-document matrix to identify hidden patterns in how terms co-occur, so that a retrieval system could, for example, separate documents about “apple” the fruit from documents about “apple” the technology company based on the surrounding vocabulary. While this was innovative for its time, it was designed for small, fixed document collections rather than billions of web pages, and Google has said it is not part of its ranking systems.
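To make the singular value decomposition idea concrete, here is a minimal Python sketch of LSI on four toy documents. Everything in it is an assumption made for illustration: the documents, the function names, and the choice of two latent dimensions. To stay dependency-free it finds the leading right singular vectors by power iteration on the document-by-document matrix AᵀA rather than calling a library SVD routine, which gives the same document coordinates for this small case.

```python
import math

# Toy corpus (hypothetical): two baking documents, two computing documents.
docs = [
    "apple pie recipe baking",
    "apple baking ingredients recipe",
    "apple computer software processor",
    "apple processor software laptop",
]

def term_document_matrix(texts):
    """Rows are terms, columns are documents, cells are raw term counts."""
    vocab = sorted({word for text in texts for word in text.split()})
    return vocab, [[text.split().count(word) for text in texts] for word in vocab]

def gram(matrix):
    """A^T A: entry (i, j) is the dot product of document columns i and j."""
    n = len(matrix[0])
    return [[sum(row[i] * row[j] for row in matrix) for j in range(n)]
            for i in range(n)]

def top_eigenpairs(sym, k, iterations=200):
    """Leading k eigenpairs of a symmetric matrix via power iteration + deflation."""
    n = len(sym)
    work = [row[:] for row in sym]
    pairs = []
    for _ in range(k):
        # Asymmetric start vector so it is not orthogonal to the target direction.
        vec = [float(i + 1) for i in range(n)]
        for _ in range(iterations):
            nxt = [sum(work[i][j] * vec[j] for j in range(n)) for i in range(n)]
            norm = math.sqrt(sum(x * x for x in nxt))
            vec = [x / norm for x in nxt]
        value = sum(vec[i] * sum(work[i][j] * vec[j] for j in range(n))
                    for i in range(n))
        pairs.append((value, vec))
        # Remove the component just found so the next pass finds the next one.
        for i in range(n):
            for j in range(n):
                work[i][j] -= value * vec[i] * vec[j]
    return pairs

def latent_doc_vectors(texts, k=2):
    """Document coordinates in the k-dimensional latent space (sigma * v)."""
    _, matrix = term_document_matrix(texts)
    pairs = top_eigenpairs(gram(matrix), k)
    return [[math.sqrt(max(value, 0.0)) * vec[i] for value, vec in pairs]
            for i in range(len(texts))]

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

vectors = latent_doc_vectors(docs)
print("baking vs baking:   ", round(cosine(vectors[0], vectors[1]), 3))
print("baking vs computing:", round(cosine(vectors[0], vectors[2]), 3))
```

In the reduced space the two baking documents end up pointing in nearly the same direction, while a baking document and a computing document are much further apart even though all four contain “apple.” That is the whole trick: co-occurrence statistics, not any understanding of what an apple is.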

Google has evolved far beyond simple keyword matching or LSI-style analysis. It now employs natural language processing systems that model meaning in ways that were out of reach for 1980s technology. The most significant advancement came with BERT (Bidirectional Encoder Representations from Transformers), which Google published as a research model in 2018 and began applying to Search in 2019. BERT analyzes each word in context by looking at the words that come both before and after it, which lets it pick up on prepositions and qualifiers that can completely change the meaning of a query.

RankBrain, Google’s machine learning system introduced in 2015, helps interpret search queries and match them to relevant content based on meaning rather than exact keyword matches. It learns from large volumes of searches to recognize patterns in how people search and what content actually satisfies their needs. MUM (Multitask Unified Model), which Google announced in 2021, extends this capability to complex, multi-step queries and to multimodal inputs that combine text and images.

The Knowledge Graph represents another crucial component of modern semantic understanding. This massive database maps entities—people, places, products, concepts—and their relationships to one another. When Google processes a search query, it doesn’t just look for pages containing specific words; it identifies the entities involved and finds content that discusses those entities in relevant ways. This entity-based approach is fundamentally different from LSI, which focused on word co-occurrence patterns rather than understanding what things actually are.

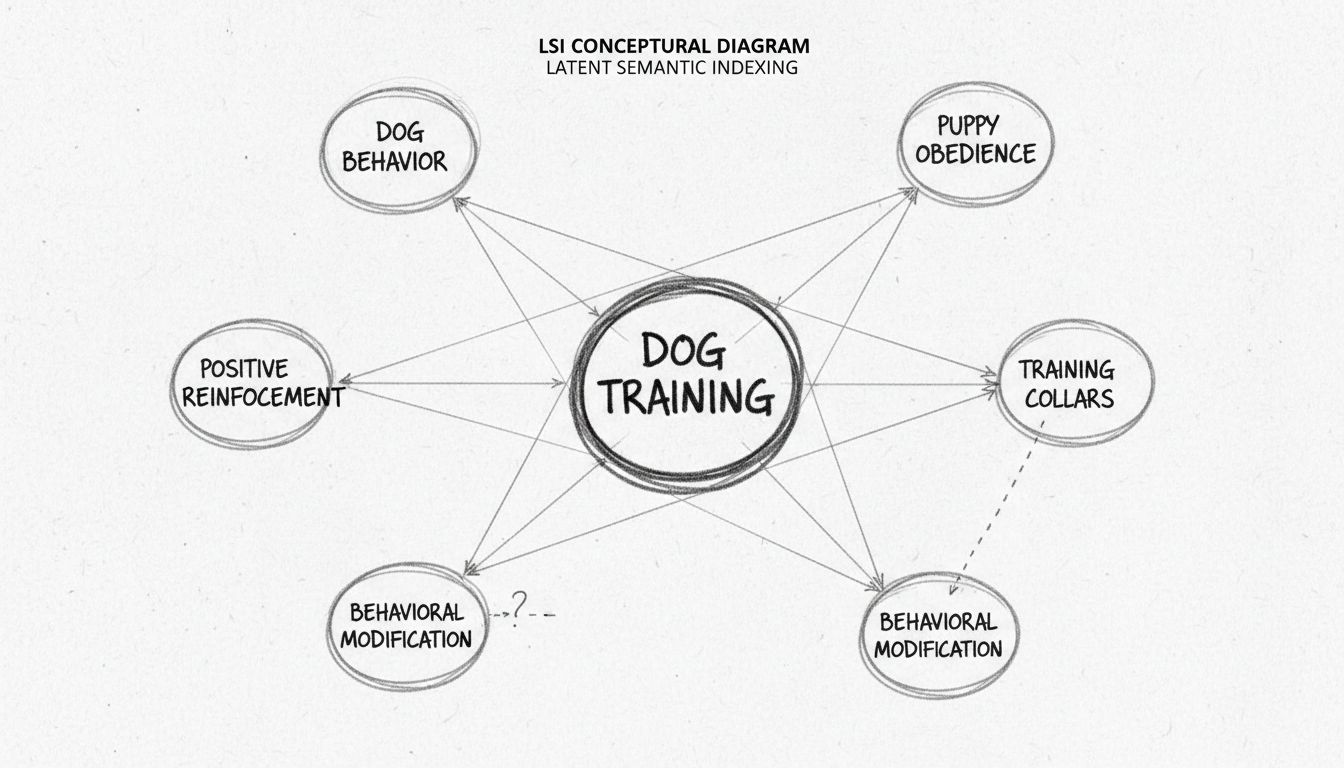

Many people use the terms “LSI keywords” and “semantic keywords” interchangeably, but this conflation obscures an important distinction. The term “LSI keywords” borrows the name of a specific mathematical technique that Google says it does not use. Semantic keywords, by contrast, are words and phrases that are contextually related to your main topic and help search engines understand the full scope of what your content covers. The confusion arose because SEO tools needed a way to describe related terms, and “LSI keywords” became convenient shorthand, even though it was technically inaccurate.

The practical difference matters significantly for content strategy. Chasing LSI keyword lists often leads to forced, unnatural writing where creators try to include every suggested term regardless of whether it fits. This approach can actually harm rankings: Google’s spam policies treat keyword stuffing as a violation, and content that reads as artificially optimized or lacks genuine coherence tends to perform poorly. Semantic SEO, by contrast, focuses on understanding your topic deeply and explaining it clearly, which naturally incorporates the contextual vocabulary that search engines recognize as signals of expertise and comprehensiveness.

| Aspect | LSI Keywords | Semantic SEO |
|---|---|---|
| Technology Used | 1980s mathematical algorithm | Modern NLP, BERT, RankBrain, Knowledge Graph |
| Google Implementation | Never used at scale | Core ranking system |
| Optimization Method | Keyword list insertion | Comprehensive topic coverage |
| Writing Quality | Often forced and unnatural | Natural, reader-focused |
| Ranking Impact | None (direct) | Significant (indirect through clarity) |
| Entity Recognition | Not applicable | Central to understanding |
| User Intent Matching | Limited | Advanced interpretation |

When Google processes a web page, it doesn’t simply count keyword occurrences or look for predetermined lists of related terms. Instead, it analyzes the entire page to understand what it’s actually about, what entities it discusses, and how well it addresses user needs. This interpretation happens through multiple layers of analysis that work together to create a comprehensive understanding of content meaning.

Natural language processing allows Google to parse sentence structure, identify grammatical relationships, and understand how words modify each other. This is why a page about “apple pie recipes” is understood differently from a page about “apple computer specifications,” even though both contain the word “apple.” The surrounding context—words like “recipe,” “baking,” “ingredients” versus “computer,” “software,” “processor”—provides the semantic signals that distinguish these topics.
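The “apple” example can be reduced to a toy Python sketch that scores each possible sense by how many of its cue words appear nearby. The cue lists, sense labels, and the function name `guess_sense` are all hypothetical, and real NLP systems learn these associations from data rather than from hand-written lists; this only illustrates how surrounding words carry the signal.

```python
import re

# Hypothetical cue words per sense, taken from the examples in the text.
SENSE_CUES = {
    "fruit": {"recipe", "baking", "ingredients", "pie"},
    "company": {"computer", "software", "processor", "specifications"},
}

def guess_sense(text):
    """Pick the sense whose cue words overlap most with the text's words."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    scores = {sense: len(words & cues) for sense, cues in SENSE_CUES.items()}
    best = max(scores, key=scores.get)
    # With no cue words at all there is nothing to disambiguate on.
    return best if scores[best] > 0 else "unknown"

print(guess_sense("Apple pie with simple baking ingredients"))
print(guess_sense("Apple computer specifications and processor speed"))
print(guess_sense("Apple"))
```

The word “apple” alone yields no decision; it is the neighbors that settle the question, which is why isolated keyword lists say so little about what a page means.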

Entity recognition takes this further by identifying specific things mentioned in the content. When Google reads about “Steve Jobs,” it doesn’t just see a name; it recognizes this as a reference to a specific person with particular attributes, relationships, and historical significance. The Knowledge Graph then connects this entity to related concepts like Apple Inc., innovation, technology, and entrepreneurship. This network of relationships helps Google understand not just what a page is about, but how it fits into the broader landscape of knowledge.
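One way to picture an entity network is as a small graph of subject, relation, object triples. The Python sketch below is only an illustration: the triples, the relation names, and the helper functions are assumptions, and it says nothing about how Google's Knowledge Graph is actually stored or queried.

```python
from collections import defaultdict

# Hypothetical triples; the relation labels are invented for this example.
TRIPLES = [
    ("Steve Jobs", "co_founded", "Apple Inc."),
    ("Apple Inc.", "makes", "iPhone"),
    ("Apple Inc.", "operates_in", "technology"),
]

def build_graph(triples):
    """Undirected adjacency map: each entity points to its direct neighbors."""
    graph = defaultdict(set)
    for subject, _relation, obj in triples:
        graph[subject].add(obj)
        graph[obj].add(subject)
    return graph

def related_entities(graph, start, max_hops=2):
    """All entities reachable from start within max_hops edges."""
    seen = {start}
    frontier = {start}
    for _ in range(max_hops):
        frontier = {nbr for entity in frontier for nbr in graph[entity]} - seen
        seen |= frontier
    return seen - {start}

graph = build_graph(TRIPLES)
print(sorted(related_entities(graph, "Steve Jobs", max_hops=1)))
print(sorted(related_entities(graph, "Steve Jobs", max_hops=2)))
```

One hop from “Steve Jobs” reaches only the company; two hops bring in the product and the industry. Content that names entities clearly and states how they relate gives a search engine more of these edges to work with.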

Google’s search advocates have been remarkably clear about their position on LSI keywords. In 2019, John Mueller, Google’s Search Advocate, stated directly on Twitter: “There’s no such thing as LSI keywords – anyone who’s telling you otherwise is mistaken, sorry.” This wasn’t a casual comment but a deliberate clarification addressing a persistent misconception in the SEO industry. Mueller has reiterated this position since then, making it clear that LSI keywords are not something Google’s ranking systems look for.

The reason usually given is straightforward: LSI was designed for small, static document collections in controlled environments. It was not built to handle the scale, diversity, and dynamism of the modern web, where billions of pages span countless topics and languages. Google instead invested in machine learning systems that learn from large volumes of data and adapt to new patterns over time.

Furthermore, LSI’s approach to finding semantic relationships is crude compared to what neural networks can accomplish. LSI is a linear factorization of term co-occurrence counts, whereas a neural network trained on billions of documents can learn far more nuanced, context-dependent relationships between concepts. This is why BERT and similar systems are so much more effective at capturing nuance, context, and meaning than an algorithm from the 1980s.

While LSI keywords themselves don’t influence rankings, the underlying principle—that search engines care about semantic relationships and contextual meaning—absolutely does affect how pages rank. The difference is that modern semantic understanding is far more sophisticated and operates at a deeper level than simple keyword matching or term co-occurrence analysis. When you write content that thoroughly covers a topic and explains concepts clearly, you’re naturally creating the semantic signals that modern search engines recognize and reward.

Comprehensive topic coverage is one of the most important semantic signals. When a page addresses a subject from multiple angles, includes supporting concepts, and explains relationships between ideas, search engines interpret this as a sign of expertise and usefulness. This is why long-form content often outranks shorter pieces—not because length itself is a ranking factor, but because depth allows for more complete semantic expression. A 500-word article about “coffee brewing methods” might mention espresso, pour-over, and French press, but a 3,000-word comprehensive guide can explore each method in detail, discuss the science of extraction, explain equipment choices, and address common mistakes. The longer piece naturally incorporates more semantic signals because it covers the topic more thoroughly.

Entity clarity is another crucial semantic signal. When you clearly define the entities your content discusses and explain their relationships, you help search engines understand your content’s meaning. If you’re writing about affiliate marketing software, clearly distinguishing between different platforms, explaining what makes each unique, and discussing how they relate to different business models provides semantic clarity that helps Google understand your content’s scope and relevance.

Understanding that Google doesn’t use LSI keywords but does care deeply about semantic meaning should fundamentally change how you approach content creation. Rather than searching for LSI keyword lists and trying to force them into your writing, focus on understanding your topic deeply and explaining it clearly. This approach produces better content for readers and better signals for search engines simultaneously.

Start by researching what questions your audience actually asks about your topic. Use Google’s “People Also Ask” section, search autocomplete suggestions, and tools like AnswerThePublic to understand the full landscape of user intent. These sources reveal the semantic relationships that matter to real searchers, not theoretical keyword lists. When you structure your content to answer these questions comprehensively, you naturally incorporate the vocabulary and concepts that search engines recognize as relevant.

Study the top-ranking pages for your target keywords, but don’t analyze them to extract LSI keyword lists. Instead, examine how they structure information, what subtopics they cover, what entities they discuss, and how they explain relationships between concepts. This analysis reveals the semantic scope that Google expects for your topic. If every top-ranking page discusses “affiliate commission structures,” “payment processing,” and “fraud prevention” alongside your main topic, these aren’t LSI keywords you need to force in—they’re essential components of comprehensive coverage that you should address naturally.
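As a rough planning aid, the comparison described above can be sketched in Python: find which candidate subtopics every top-ranking page covers, then check which of those your draft has not addressed yet. The page snippets, the candidate list, and the function names are all hypothetical, and phrase matching is a deliberately crude stand-in for actually reading the pages. It is a coverage checklist, not a ranking formula.

```python
import re

def mentions(text, phrase):
    """True if the phrase appears in the text as whole words, ignoring case."""
    pattern = r"\b" + re.escape(phrase.lower()) + r"\b"
    return re.search(pattern, text.lower()) is not None

def shared_subtopics(pages, candidates):
    """Candidate subtopics that every one of the given pages mentions."""
    return [c for c in candidates if all(mentions(page, c) for page in pages)]

def coverage_gaps(draft, pages, candidates):
    """Subtopics all pages share that the draft does not mention yet."""
    return [c for c in shared_subtopics(pages, candidates)
            if not mentions(draft, c)]

# Hypothetical excerpts standing in for the text of top-ranking pages.
top_pages = [
    "Our guide covers affiliate commission structures, payment processing, and fraud prevention.",
    "Learn about payment processing, fraud prevention, and affiliate commission structures.",
    "Fraud prevention tips, affiliate commission structures, payment processing, and cookie windows.",
]
candidates = [
    "affiliate commission structures",
    "payment processing",
    "fraud prevention",
    "cookie windows",
]
draft = "This article explains affiliate commission structures and payment processing."

print(coverage_gaps(draft, top_pages, candidates))
```

Here the draft is missing “fraud prevention,” which all three pages cover, while “cookie windows” is not flagged because only one page discusses it. The output is a prompt to write a proper section on the missing subtopic, not a term to sprinkle into existing paragraphs.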

Despite years of clarification from Google, several myths about LSI continue to circulate in SEO communities and training materials. One persistent misconception is that LSI keyword tools provide some special insight into how Google ranks content. In reality, these tools simply show related search terms and synonyms—information you can find for free using Google’s own search features. The “LSI” branding is pure marketing; the tools don’t perform any latent semantic indexing analysis.

Another common myth suggests that you need to include a specific number or percentage of LSI keywords for optimal rankings. This idea leads to formulaic, unnatural writing that actually harms content quality. Modern search engines evaluate content holistically, not by checking off keyword requirements. A page that naturally incorporates relevant concepts while maintaining excellent readability and user experience will outrank a page that artificially includes more keywords but reads awkwardly.

Some marketers still believe that LSI keywords can compensate for thin or low-quality content. This is fundamentally false. Adding related terms to shallow content doesn’t make it comprehensive or authoritative. Search engines evaluate the actual depth of explanation, the quality of information, and whether the content genuinely addresses user needs. No amount of keyword variation can substitute for genuine expertise and thorough coverage.

Rather than chasing outdated SEO myths, successful affiliate program managers need tools that help them understand what actually drives conversions and engagement. PostAffiliatePro provides comprehensive analytics and tracking that shows you exactly which content strategies, affiliate partners, and marketing approaches generate real results. Instead of guessing whether your semantic optimization is working, you can see concrete data about traffic sources, conversion rates, and revenue attribution.

PostAffiliatePro’s advanced reporting capabilities help you understand the relationship between your content strategy and actual business outcomes. You can track which pages drive the most qualified traffic, which affiliate partners generate the highest-value conversions, and how different content approaches impact your bottom line. This data-driven approach is far more effective than following generic SEO advice, because it’s based on your specific audience, market, and business model.

The platform’s real-time tracking and detailed analytics also help you identify opportunities for optimization that generic SEO tools might miss. You can see exactly how users interact with your content, where they drop off, and what messaging resonates most strongly. This insight allows you to refine your content strategy based on actual user behavior rather than theoretical best practices.

The persistence of LSI keyword discussions in 2025 represents a disconnect between outdated SEO theory and modern search reality. Google has been clear and consistent: LSI keywords are not part of their ranking systems. What matters instead is semantic understanding—the ability of search engines to comprehend what your content is actually about, what entities it discusses, and how well it addresses user needs.

For affiliate marketers and content creators, this clarity should be liberating. Rather than spending time researching LSI keyword lists and trying to force them into your writing, invest that effort in understanding your topic deeply and explaining it clearly. Write for your audience first, optimize for search engines second, and trust that comprehensive, well-structured content will naturally incorporate the semantic signals that modern search engines recognize and reward.

The future of SEO isn’t about keyword tricks or mechanical optimization—it’s about creating genuinely useful content that demonstrates expertise and addresses real user needs. By focusing on semantic clarity, comprehensive topic coverage, and entity-based understanding, you’ll create content that ranks well not just in Google’s traditional search results, but also in AI-generated answers and emerging search platforms. That’s the real competitive advantage in 2025 and beyond.

