How Does Split Testing Work? Complete Guide to A/B Testing

Learn how split testing works with our comprehensive guide. Discover the methodology, statistical significance, best practices, and how PostAffiliatePro helps o...

Learn what Facebook split testing (A/B testing) is and how to use it to optimize your ad campaigns. Discover best practices, setup methods, and proven strategies to increase ROI.

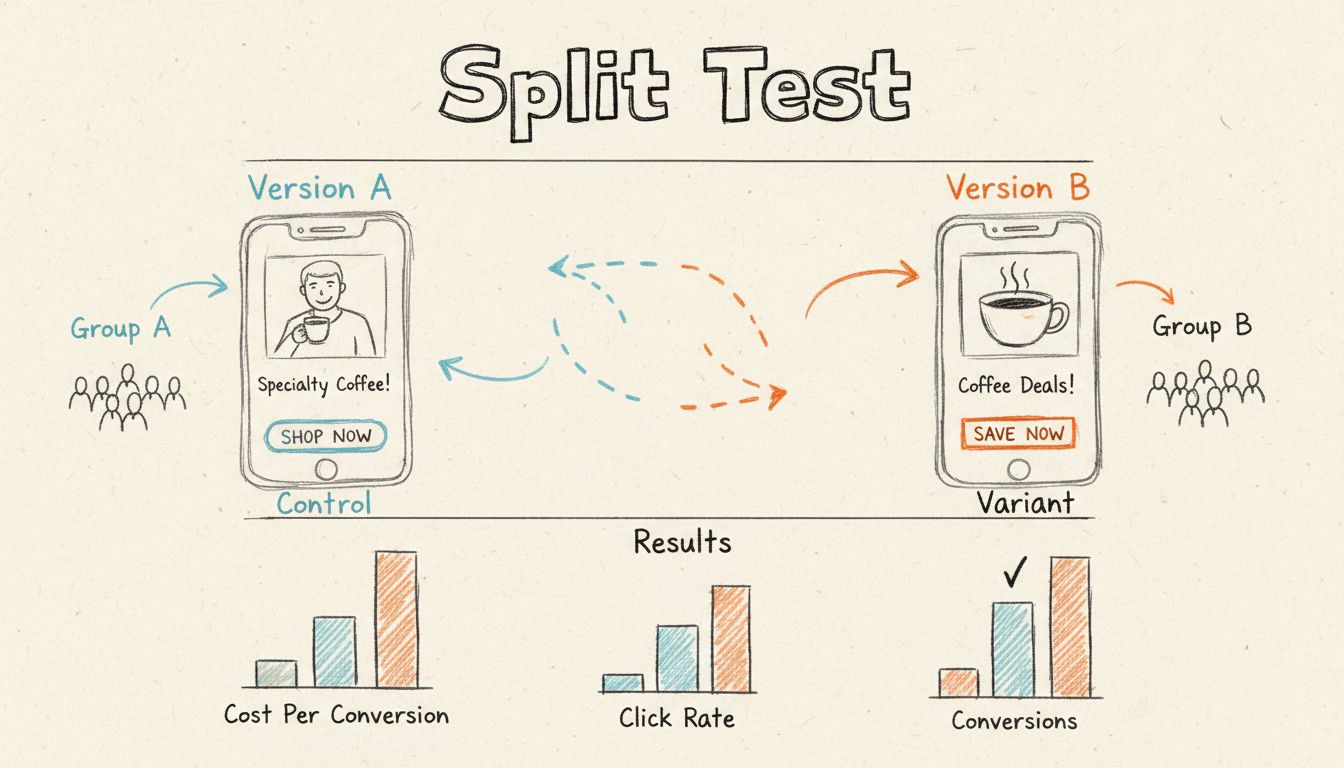

A split test on Facebook is an experiment where two or more variants of a Facebook ad are shown to different groups of people to determine which version is more effective in driving desired outcomes like clicks, conversions, or purchases.

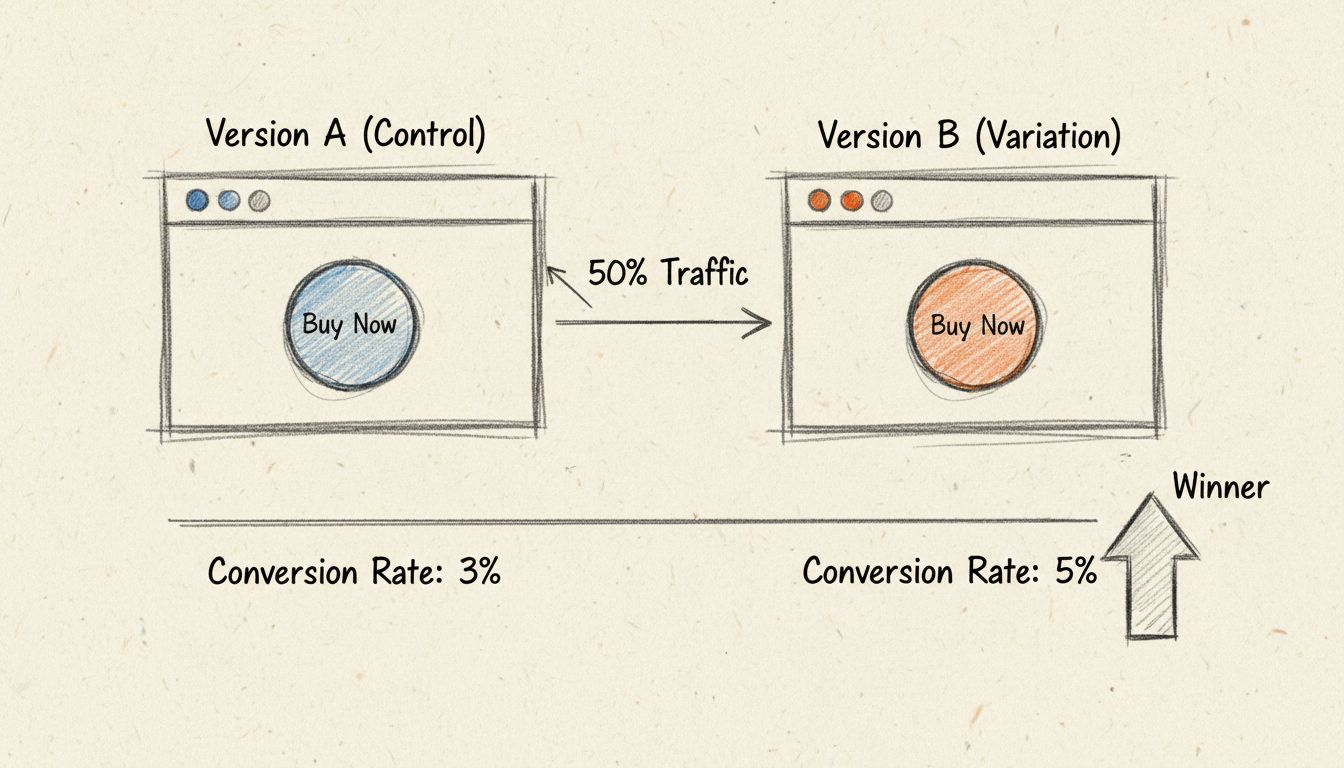

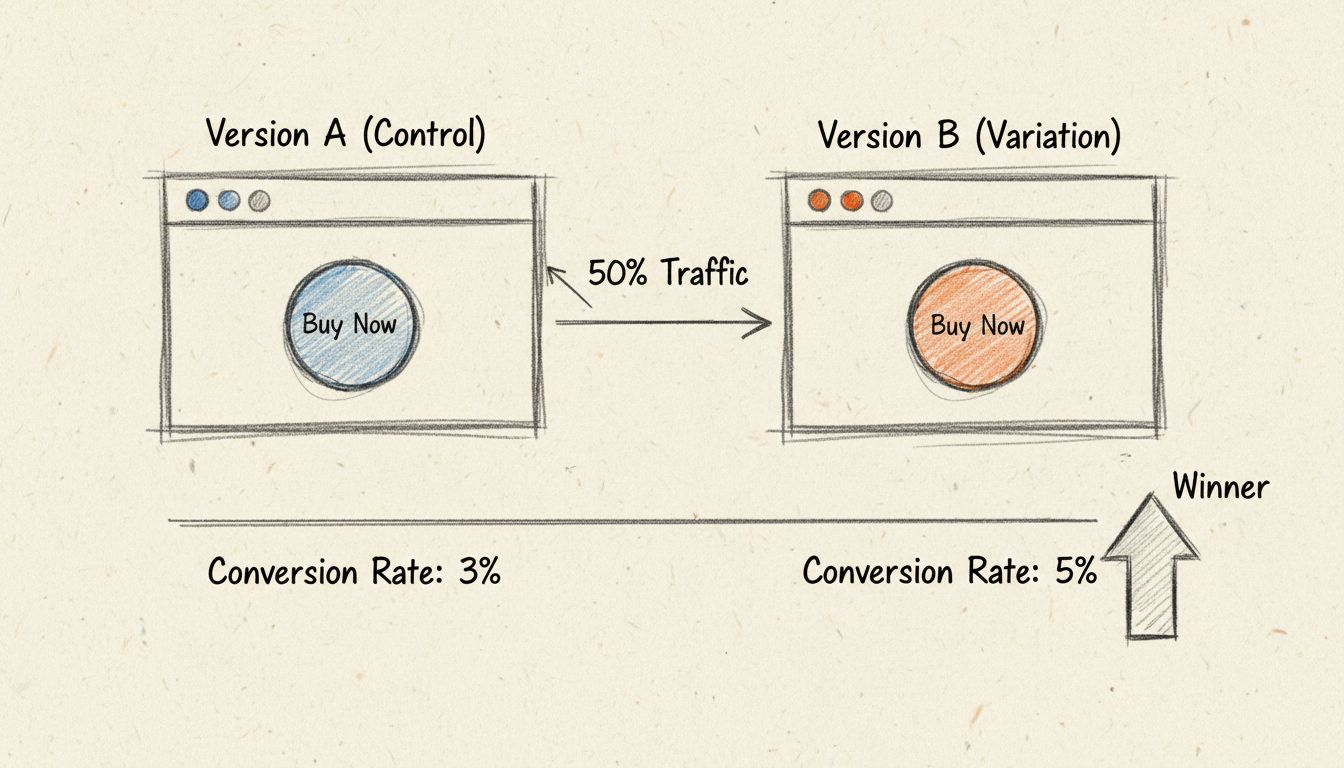

A split test, also known as A/B testing or bucket testing, is a controlled experiment that compares the performance of two or more versions of a Facebook ad to determine which one delivers superior results. This methodology isolates a single variable at a time—such as ad creative, audience targeting, placement, or delivery optimization—and measures how that specific change impacts your campaign’s key performance indicators. By running these experiments systematically, advertisers can make data-driven decisions rather than relying on assumptions or personal preferences about what might work best with their target audience.

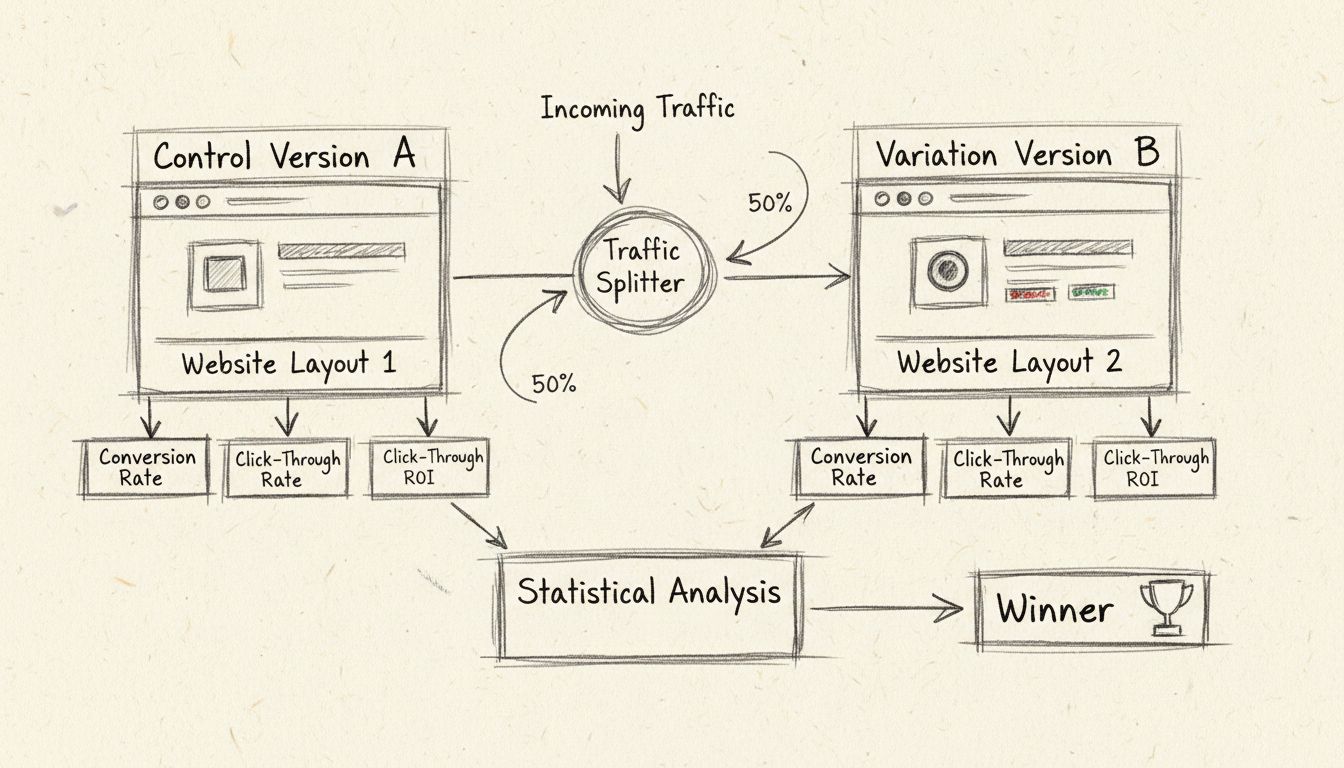

The fundamental principle behind split testing is statistical validation. When you run a split test on Facebook, the platform automatically divides your audience into separate, randomly assigned groups to ensure each variation receives equal exposure and unbiased data collection. This scientific approach eliminates the risk of audience overlap and provides reliable insights into which ad version will perform better when scaled across your entire marketing budget. The results are typically presented with a confidence level, indicating the probability that you would achieve the same results if you ran the identical test again.
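The random, non-overlapping assignment described above can be illustrated with a small sketch. It is not Facebook's implementation: the function name `assign_variant`, the test ID, and the user IDs are all hypothetical, and hashing is only one common way to get a stable, roughly even split.

```python
import hashlib

def assign_variant(user_id: str, test_id: str, variants: list[str]) -> str:
    """Deterministically map a user to exactly one variant of a test.

    Hashing the (test_id, user_id) pair means the same user always lands in
    the same group, so the groups cannot overlap.
    """
    digest = hashlib.sha256(f"{test_id}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]

# Hypothetical test with two ad variants and 10,000 made-up user IDs.
variants = ["ad_a", "ad_b"]
counts = {name: 0 for name in variants}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "creative-test-1", variants)] += 1

print(counts)  # the two groups should be close to an even split
```

Because the assignment depends only on the IDs, rerunning the script reproduces the same groups, which makes the split easy to audit.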

Facebook’s split testing tool operates within the Ads Manager and requires you to select the split test option during campaign creation; you cannot add split testing to an existing campaign. Once you’ve chosen your campaign objective, you must specify which variable you want to test. The platform then creates separate ad sets for each variation and distributes your budget evenly (or according to your weighted split preference) across all test variations. This structure gives each variation a comparable share of impressions and interactions, although reaching statistical significance still depends on running the test with enough budget and for long enough.
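As a rough illustration of an even versus weighted split, the sketch below divides a total budget across variations in proportion to their weights. The function `split_budget` and the budget figures are assumptions made for this example, not part of the Ads Manager.

```python
def split_budget(total_budget: float, weights: dict[str, float]) -> dict[str, float]:
    """Divide a total budget across variations in proportion to their weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return {
        name: round(total_budget * weight / total_weight, 2)
        for name, weight in weights.items()
    }

# Even split: every variation gets the same weight.
even = split_budget(300.0, {"A": 1, "B": 1, "C": 1})

# Weighted split: 60% of the budget to A, 40% to B.
weighted = split_budget(300.0, {"A": 60, "B": 40})

print(even)
print(weighted)
```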

The algorithm behind Facebook’s split testing is designed to minimize bias and maximize data accuracy. Rather than allowing Facebook’s optimization algorithm to favor one ad over another based on early performance signals, the split test maintains equal budget allocation throughout the test period. This means that even if one variation appears to be performing better after just a few days, it won’t receive disproportionate budget allocation, which could skew your results. The test runs for your specified duration, and at the end, Facebook calculates which variation achieved the lowest cost per result based on your campaign objective.
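The final comparison can be sketched in a few lines: divide spend by results for each variation and take the lowest value. The class name, field names, and figures below are hypothetical; the real report comes from the Ads Manager.

```python
from dataclasses import dataclass

@dataclass
class VariantResult:
    name: str
    spend: float   # total amount spent on this variation
    results: int   # conversions, clicks, or whatever the objective counts

    @property
    def cost_per_result(self) -> float:
        # A variation with no results is treated as infinitely expensive.
        return self.spend / self.results if self.results else float("inf")

def pick_winner(variants: list[VariantResult]) -> VariantResult:
    """Return the variation with the lowest cost per result."""
    return min(variants, key=lambda v: v.cost_per_result)

# Equal spend per variation, as in a split test; the numbers are made up.
data = [
    VariantResult("image_ad", spend=150.0, results=25),
    VariantResult("video_ad", spend=150.0, results=30),
]
winner = pick_winner(data)
print(winner.name, winner.cost_per_result)
```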

| Test Variable | Description | Best Use Case |
|---|---|---|
| Creative | Different ad images, videos, copy, headlines, and calls-to-action | Testing which visual or messaging approach resonates most with your audience |
| Audience | Different demographic segments, interests, behaviors, or custom audiences | Identifying which audience segment has the highest conversion value |
| Placements | Automatic placements versus specific placements (Feed, Stories, Reels, etc.) | Determining which ad placement generates the best ROI |
| Delivery Optimization | Different bidding strategies and optimization goals (clicks, conversions, engagement) | Finding the most cost-effective optimization method for your objective |
| Product Set | Different product catalogs or collections (for e-commerce) | Identifying which product assortment drives the most sales |

Each of these variables can dramatically impact your campaign performance. For instance, testing creative variations can reveal that a simple, minimalist design outperforms a complex, feature-rich design by 143%, as demonstrated in real-world case studies. Similarly, audience testing might show that a specific demographic segment has a cost per conversion that’s 50% lower than your broader audience, allowing you to refine your targeting strategy significantly.

Split testing is not merely a nice-to-have feature—it’s a fundamental requirement for optimizing Facebook advertising performance and maximizing return on ad spend. Without split testing, you’re essentially guessing which elements of your campaigns work best, and those guesses are often wrong. Research shows that split testing can increase ROI by up to 10 times when executed properly, transforming underperforming campaigns into highly profitable marketing channels. The data-driven insights you gain from split tests inform not only your immediate campaign optimization but also your broader marketing strategy and creative development process.

Beyond immediate performance improvements, split testing provides invaluable insights into your audience’s preferences and behaviors. When you discover that your audience responds better to storytelling-based copy than feature-focused copy, or that video content outperforms static images by a significant margin, you’ve gained knowledge that extends far beyond a single campaign. These insights can be applied to your email marketing, landing pages, social media content, and other marketing channels, creating a compounding effect on your overall marketing effectiveness.

Creating a split test in Facebook Ads Manager requires a strategic approach. First, you must establish a clear hypothesis about what you want to test and why. Rather than testing random variations, successful split testing starts with a specific question: “Will video ads generate a lower cost per conversion than image ads?” or “Does our 25-34 age demographic convert at a higher rate than our 35-44 demographic?” This hypothesis-driven approach ensures that your test results will provide actionable insights.

Next, you need to determine your budget allocation. A meaningful split test requires sufficient data collection, which means each variation should generate at least 10 to 20 conversions before you draw conclusions. If your average cost per conversion is $5 and you’re testing five different ad creatives, you’ll need a minimum budget of $250 to $500 (5 variations × 10 to 20 conversions × $5). A larger budget generally helps, as it shortens the time required to reach statistical significance and provides more robust data.
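The budget estimate above is a simple product, sketched here so you can plug in your own numbers. The function name `minimum_test_budget` is hypothetical; the inputs are the figures from the paragraph.

```python
def minimum_test_budget(
    cost_per_conversion: float,
    num_variations: int,
    conversions_per_variation: int,
) -> float:
    """Minimum spend so every variation can reach the target conversion count."""
    return cost_per_conversion * num_variations * conversions_per_variation

# $5 per conversion, five creatives, 10 to 20 conversions each.
low = minimum_test_budget(5.0, 5, 10)
high = minimum_test_budget(5.0, 5, 20)
print(f"Minimum budget: ${low:.0f} to ${high:.0f}")
```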

Before launching your split test, you must define which metrics will determine success or failure. The most commonly used metrics include cost per result (CPR), cost per click (CPC), cost per acquisition (CPA, also called cost per conversion), click-through rate (CTR), and return on ad spend (ROAS). However, selecting the right metric depends entirely on your business goals and campaign objective. For most businesses, cost per conversion is an excellent starting point because it ties directly to profitability and business growth.

Advanced advertisers often track revenue generated by each conversion and use ROAS as their primary metric, as this reflects the revenue each ad variation actually brings in rather than just the number of conversions. If you’re running a lead generation campaign, you might focus on cost per lead instead. The key principle is to select a single primary metric for your initial split tests, as monitoring multiple metrics simultaneously can produce confusing or contradictory results. For example, an ad with an excellent click-through rate might have a terrible cost per conversion, indicating that while the ad attracts clicks, those clicks don’t convert into valuable actions.
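To make the conflict between metrics concrete, here is a minimal sketch that computes the standard ratios for two ads. The function `ad_metrics` and all of the figures are invented for illustration, and the code assumes every denominator is non-zero.

```python
def ad_metrics(impressions: int, clicks: int, conversions: int,
               spend: float, revenue: float) -> dict[str, float]:
    """Compute common ad metrics; assumes all denominators are non-zero."""
    return {
        "ctr": clicks / impressions,   # click-through rate
        "cpc": spend / clicks,         # cost per click
        "cpa": spend / conversions,    # cost per conversion
        "roas": revenue / spend,       # return on ad spend
    }

# Ad A attracts many clicks but few conversions; Ad B is the reverse.
ad_a = ad_metrics(impressions=10_000, clicks=300, conversions=3, spend=100.0, revenue=150.0)
ad_b = ad_metrics(impressions=10_000, clicks=150, conversions=10, spend=100.0, revenue=500.0)

for name, m in (("A", ad_a), ("B", ad_b)):
    print(f"Ad {name}: CTR {m['ctr']:.1%}, CPA ${m['cpa']:.2f}, ROAS {m['roas']:.1f}")
```

Judged on CTR, Ad A looks better; judged on cost per conversion or ROAS, Ad B does. Choosing the primary metric before the test starts avoids arguing about the winner afterwards.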

The way you structure your split test within Facebook’s campaign hierarchy significantly impacts data reliability. When testing creative variations (images, copy, headlines), you should create multiple ads within the same ad set, as they’ll share the same audience and targeting parameters. However, when testing audience segments or placements, you should create separate ad sets for each variation, allowing you to control budget allocation and ensure each segment receives equal exposure.

One critical consideration is avoiding budget concentration. Facebook’s algorithm can be aggressive in allocating budget to the ad it perceives as “winning,” which means one variation might receive three times more impressions than another, skewing your results. To prevent this, some advanced marketers create individual ad sets for each creative variation with equal budgets, ensuring perfectly balanced data collection. While this approach increases overall costs due to multiple ad sets competing for the same audience, it provides the most scientifically accurate results.

Many advertisers make critical errors when implementing split tests that compromise data quality. The most common mistake is stopping a test too early, often after just a few hours or days when one variation appears to be significantly outperforming the others. In reality, performance can shift dramatically over time, and what appears to be a clear loser in the first 24 hours might become the winner by day seven. Facebook recommends running split tests for 4 to 14 days to account for daily variations in audience behavior and to ensure sufficient data accumulation.

Another frequent error is overtesting your audience through excessive segmentation. When you create too many audience segments—for example, testing 2 genders × 5 interests × 5 age ranges = 50 different ad sets—you end up with extremely small audience segments that become expensive to reach. Facebook must work harder to find users matching very specific criteria, driving up your cost per impression and making it difficult to gather meaningful data. The best practice is to start with broad audience segments and refine based on results, rather than starting with hyper-specific targeting.
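The combinatorial growth described above is easy to check with a short sketch. The segment labels and the audience size are placeholders, and the per-segment figure assumes an even split, which real audiences will not follow.

```python
from itertools import product

# Placeholder segment labels; only the counts matter for the arithmetic.
genders = ["female", "male"]
interests = ["fitness", "travel", "cooking", "gaming", "fashion"]
age_ranges = ["18-24", "25-34", "35-44", "45-54", "55+"]

# Every combination of gender, interest, and age range becomes its own ad set.
segments = list(product(genders, interests, age_ranges))
print(len(segments), "ad sets")

# Hypothetical total audience, divided evenly for illustration only.
total_audience = 1_000_000
average_segment_size = total_audience / len(segments)
print(f"About {average_segment_size:,.0f} people per ad set")
```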

Once your split test completes, Facebook provides results in two formats: a results email and performance data visible in the Ads Manager. The platform identifies a winning ad set based on the lowest cost per result and assigns a confidence level indicating the probability of achieving the same results if you ran the test again. Facebook typically declares a winner when the results reach a confidence level of 75% or higher, meaning there’s at least a 75% chance the same variation would win in a repeat test.
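Facebook does not publish the exact calculation behind its confidence level, so the following is only a rough stand-in to build intuition. It estimates how often variation A's underlying conversion rate would beat B's by sampling from Beta distributions fitted to the observed counts. The function name `win_probability` and the inputs are assumptions for this example.

```python
import random

def win_probability(conv_a: int, n_a: int, conv_b: int, n_b: int,
                    draws: int = 20_000, seed: int = 0) -> float:
    """Monte Carlo estimate of how often A's conversion rate exceeds B's.

    conv_* are observed conversions; n_* are the opportunities (for example,
    link clicks). Each rate is drawn from a Beta distribution built from the
    observed counts with a uniform prior. This is an illustrative
    approximation, not Facebook's method.
    """
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(conv_a + 1, n_a - conv_a + 1)
        rate_b = rng.betavariate(conv_b + 1, n_b - conv_b + 1)
        if rate_a > rate_b:
            wins += 1
    return wins / draws

# Two near-identical variations: the estimate should sit close to 50%.
close_call = win_probability(30, 1000, 30, 1000)

# One variation converting twice as often: the estimate should be very high.
clear_win = win_probability(60, 1000, 30, 1000)

print(f"Similar ads: {close_call:.0%}, clearly different ads: {clear_win:.0%}")
```

With equal spend per variation, a higher conversion rate corresponds to a lower cost per result, which is why comparing rates is a reasonable proxy here.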

If your results show low confidence (below 75%), Facebook recommends running the test again with either a longer duration or higher budget to gather more data. This is particularly common when two variations perform very similarly, as the algorithm cannot definitively determine a winner. Once you have a clear winner, you have several options: pause underperforming variations and scale the winner, redistribute budget to allocate more spending to top performers while maintaining minimal budget for underperformers, or create a new campaign using the winning variation as your control for the next round of testing.

Sophisticated advertisers use a progressive testing methodology where they start with broad variations and gradually refine based on results. For example, you might first test two very different creative approaches (minimalist design versus feature-rich design) across a broad audience. Once you identify the winning creative direction, you then test variations within that winning approach (different colors, different headlines, different calls-to-action). This funnel approach maximizes learning while minimizing wasted budget on variations that don’t align with your audience’s preferences.

Another advanced strategy is continuous testing, where you maintain a small portion of your budget dedicated to testing new variations while the majority of your budget supports proven winners. This approach ensures you’re constantly discovering new opportunities for improvement while maintaining stable, predictable performance from your core campaigns. PostAffiliatePro’s advanced tracking capabilities make this strategy particularly effective, as you can monitor performance across multiple test variations and automatically allocate budget based on real-time performance data.

Facebook offers several testing methodologies beyond traditional split testing. Dynamic Creative optimization allows you to upload multiple creative assets and let Facebook’s machine learning algorithm automatically test combinations and serve the best-performing variations. This differs from split testing because Facebook controls the budget allocation and can shift resources to winning combinations in real-time. Dynamic Creative is ideal when you have many creative assets and want Facebook to optimize automatically, while split testing is better when you want precise control and scientific accuracy.

Brand Lift and Conversion Lift measurement tools provide more comprehensive insights than split testing by measuring the incremental impact of your ads compared to a control group that doesn’t see your ads. These tools are particularly valuable for large-budget campaigns where you want to understand not just which ad performs better, but how much of your business results are actually attributable to your advertising efforts. However, these tools require larger budgets and longer test periods than traditional split testing.

The ultimate goal of split testing is maximizing return on ad spend by continuously optimizing every element of your campaigns. Real-world case studies demonstrate the dramatic impact of split testing: one company reduced its cost per acquisition from $4,433 for a single sale to $123.45 per sale through systematic split testing of ad copy and creative, a cost reduction of roughly 97%. Another example showed a 100%+ improvement in cost per conversion simply by changing the ad image.

These results aren’t anomalies—they’re the expected outcome of disciplined, systematic split testing. By testing one variable at a time, gathering sufficient data, and acting on results, you can consistently improve campaign performance. When combined with PostAffiliatePro’s comprehensive tracking and analytics platform, split testing becomes even more powerful, as you can track performance across multiple campaigns, identify patterns in what works, and apply those learnings across your entire affiliate marketing portfolio.

